About This Episode

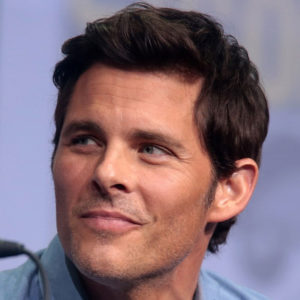

What separates a human from a robot? Is it easy to make the distinction? On this week’s episode of StarTalk Radio, Neil deGrasse Tyson explores the science of Westworld and the future of artificial intelligence with Westworld star James Marsden. In-studio, Neil is joined by comic co-host Chuck Nice, Susan Schneider, and David Eagleman, PhD.

You’ll learn about the show Westworld, which is set in a theme park that allows humans to live out their fantasies by interacting with “hosts” exhibiting various degrees of artificial intelligence. You’ll hear about James’ role on the show and why, even though the role seems “human” on the surface, there’s much more underneath. James tells us why he has to bring different levels of consciousness to the role depending on the scene.

We discuss when, if ever, robots will be indistinguishable from humans. To help elaborate on this point, Sophia, the artificially intelligent robot, drops in to share her story. Should we treat robots the same as humans even if robots have no emotions? Sophia weighs in on the discussion. She also tells us why proving sentience is a nearly impossible task. We ponder whether robots can even have free will, which leads to the question of whether humans have free will. We discuss killing in Westworld: is it acceptable for robots to get killed over and over if they feel no emotion and can repair themselves? Or is it tragically crueler because every time it happens, it’s the first time it happens for them?

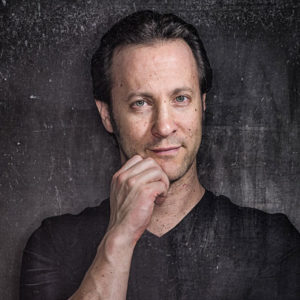

Neuroscientist and Westworld science consultant David Eagleman, PhD, stops by to share his thoughts on the portrayal of AI in the show. We investigate the overall benefits and risks of AI, and answer fan-submitted questions about The Matrix, AI Twitter robots, and whether anything is ever truly sentient. Neil explains why he’s not worried about being replaced by an AI – and then we find out what happens when you make a predictive AI read hundreds of Neil’s tweets and try to come up with one on its own. Lastly, we try to understand what “reality” would be like if consciousness extended to robots. All that, plus, we ask the age-old question: would you want to live forever?

Thanks to this week’s Patrons for supporting us:

Kate Sturgess, Jacob H, Bill Farthing, Frank Kane, Tyler Ford, Katie Gared

NOTE: StarTalk+ Patrons and All-Access subscribers can listen to this entire episode commercial-free.
