About This Episode

Can you listen to a picture of the universe? Neil deGrasse Tyson and comic co-host Chuck Nice welcome back data-sonification expert Kim Arcand of the Chandra X-ray Observatory to explore how translating cosmic data into sound lets us sense the universe in entirely new ways.

We break down what sonification is and why it helps both sighted and non-sighted people interpret information hidden in invisible wavelengths like X-rays and infrared. From reconstructing images through sound to rethinking how we perceive radio signals in movies like Contact, Neil and Chuck trace how these translations shift our understanding of space itself.

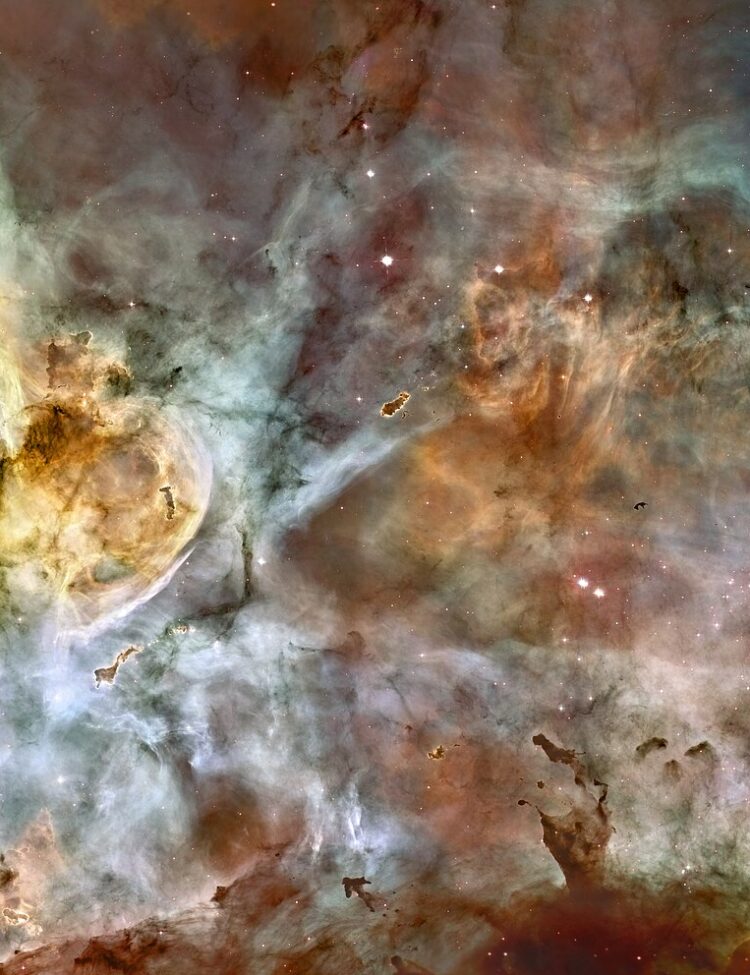

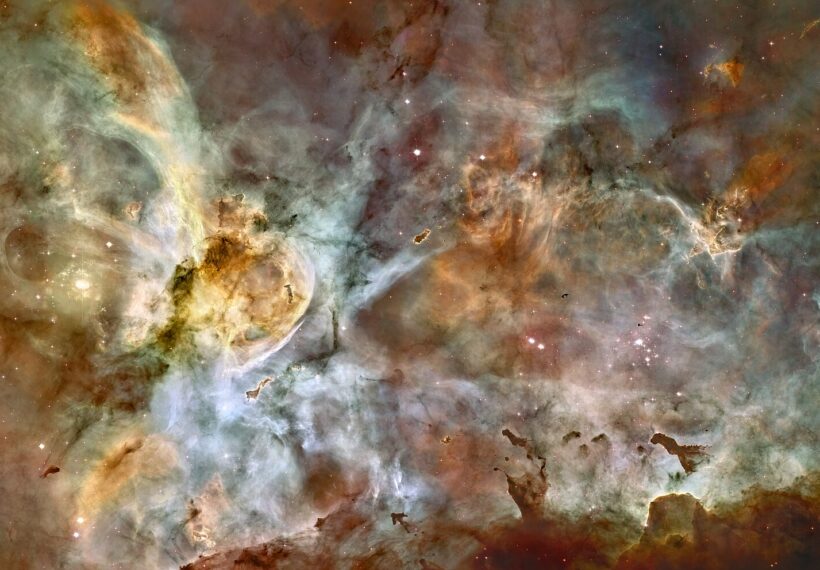

With Chandra’s razor-sharp resolution, we explore how it is perfect for studying black holes, pulsars, and high-energy cosmic events. We break down stellar explosions, the bizarre behavior of Eta Carinae, and how 3D-printed nebulae help scientists analyze structures like the Homunculus.

Learn about the power of sonification for detecting patterns, color-mapping choices in astrophysics, the eerie “sounds” of black holes, and how visualization can reveal details that raw numbers alone might miss. They dive into VR for space missions, coordinated global telescope campaigns, and explain why every new observatory opens a fresh cosmic window.

Thanks to our Patrons William Ash, Jonathan Bond, Frank Clowes, Aureus Griffith, Steven Tull, Jane, Rachel Banks, Dave, Colin Segovis, Danilo Alcantara, Nick Poulos, Val Teal, jr242, Kenny MacFarlane, LT From DC, A.J. Gonzalez, Aria Vaughn, Damion King, Aluarua Borealis, Thom Sturgill, Justin Perleoni, Elizabeth Fortier, Jagger Carter, FutureFear, AI, Aaron Hardy, GillaBreed42, Leah Stoker, Shayba Muhammad, Micheal Shepard, Jyri Körmöläinen, Christopher Boggs, Robert, Alwaleed Althani, sonja, Stephen Vyskocil, Luc Sr, Gina Boyd, Nathaniel Toups, Pam Floyd, Dent, Arthur Dent, Judie Stanley, Corey Therrien, Jay Lo, Bret, Matthias Beckmann, Girlgeek101, Alek Pyers, Wingo, Ricky G, Austin, Ian Simonson, Jennifer A Ford, Mark Shaefer, Stephen Karlson, Tyler Evans, Gabriel Najul, Evan F, Jeff Soner, Stiven Miranda, Joey Ostos, Lian, Deontae R, Brian Isaman, Chris Kempel, Mike Burns, Alicia Mendez, Dan Dial, Trey Hopkins, Nater Tater, Nata, Lynn Wladen, Allison T, Daniel Hall, Mick JB, Dick Cox, Yonatan Broder, Clayton Smith, DBP19, Justin Cooke, Braulio A Rivera, TurboShark, Tmac, Cory Hack, Nick Haner, Stephy B, Sophie, Will Atwood, Julie Bradley, Greg, Davey Qasem, Jeff, Malerie Corniea, Micki Thomas, and Will P. for supporting us this week.

NOTE: StarTalk+ Patrons can listen to this entire episode commercial-free.

Transcript

I’m completely charmed that you can take a picture and listen to it.

You can take a sound and look at it.

This is mixing up our senses for the greater good of science.

Oh, I’m sorry, I couldn’t hear you.

I was listening to the universe.

Coming up, Kim Arcand, data sonification expert for the Chandra X-ray Telescope, returns to StarTalk.

Welcome to StarTalk.

Your place in the universe where science and pop culture collide.

StarTalk begins right now.

This is StarTalk.

Neil deGrasse Tyson, your personal astrophysicist, and we’re going to do Cosmic Queries today.

Chuck.

What’s up, Neil?

When we do Cosmic Queries, you come supplied with the queries.

With the actual queries.

With one of our regular, is she a regular yet?

She is a regular at this point.

She’s been on four times, something like that.

Easily, yeah.

Yeah, the one and only Kim Arcand.

Kim, welcome back to StarTalk.

Thank you, I feel like I need a jacket or something, like they do at Saturday Night Live, you know?

Uh-oh, she puts the pressure on us now.

A five-timer.

Yeah, we’re gonna have to get her a Masters jacket.

A yellow or a gold jacket.

All right, she just, all right, maybe we gotta do something like about that, okay.

Thanks for that idea.

Yeah, so Kim, you’re a visualization scientist, emerging technology lead for the Chandra X-ray Telescope, which is, is that run out of the Harvard-Smithsonian Center for Astrophysics?

Yes, the Chandra X-ray Observatory is a NASA mission that is run for NASA by the Smithsonian.

So I am up here at the Center for Astrophysics in Massachusetts.

Well, though I’m not technically there right now, I am, that’s my usual base.

Apart from the cool stuff you do, what has made your career unique, you have pioneered data sonification.

Just remind us what that is.

We spent the whole show on that in our archives.

People can dig that up.

But just for now, just remind us what you do there.

Yeah, so my whole job is just about thinking about our data differently and figuring out other ways that we can visualize it, translate it into sound, which is sonification, bring it into tactile or otherwise like haptification types of environments through 3D printing or vibrational response, and just trying to really dig down into how we represent our data, whether for scientific analysis or for communication and public engagement, both of which are very, to me, worthwhile things to do.

Yeah, so if you’re shifting the sensory experience, this could be highly useful for people whose sensory physiology doesn’t match that of what is average.

Exactly.

We work very closely with the blind and low vision community, particularly on the data sonification and some of our tactile materials as well.

But all of this grows out of like these are valid tools of scientific expression and analysis, right?

So, sonification is actually a tool used in the sciences to work with data.

It’s used by scientists who are blind or low vision, but also by sighted scientists because you can just think, right?

If you’re a sighted scientist and you’re looking at an image all the time and it’s very familiar to you, you can almost become numb to it, right?

The data is the data, but when you introduce new senses or new experiences, new modalities, it can just rewire your brain a little bit differently, right?

So something that you might have looked at a lot or felt familiar with, it can kind of like open a new window.

And I love that possibility for additional exploration.

Now, it’s not an accident that you’re working on a telescope that specializes in X-rays.

None of those bands of light are visible to the human eye.

But in principle, someone such as you could exist for every telescope that’s out there, because even for visual imagery that we all just take for granted, that we can see color photos, people who are low-vision or blind can’t see them, and that could still benefit from sonification, correct?

Correct, yes.

So, I mean, Chandra really has been the impetus for this for me, because I started thinking very early on in the mission, this is all invisible data, right?

Humans cannot naturally see X-rays.

And the same goes for the infrared data that the Webb telescope looks at, and for radio data, and even the data that the Hubble Space Telescope is gathering, right?

We are not taking space selfies of the universe, like we are translating that data into a visual representation through extreme magnification, through the translation of these different kinds of light.

And so that sort of possibility that this data does not only have to be visualized, but there are other ways to explore, it just gives you a new kind of like sandbox to play in.

And when you’re particularly in tune to how other people understand data, process data, think about data, it just allows you to try some things that can be really cool in and of themselves, right?

It’s like that curb-cut effect: you cut a curb and you can use it if you’re a parent with a stroller, you can use it if you have a wheelchair, you can use it if you’re on crutches, you can use it if you’re on a bicycle, whatever, right?

It benefits multiple people.

And I think that’s one of the really exciting things about thinking of your data differently and trying different multimodal approaches.

It also gives you an opportunity for different manipulation of the data when you’re working with it.

So what came to my mind the first time I read, you sent us an article last year.

And when I was reading it, I thought about sound editors.

And sound editors don’t edit using sound.

They actually look at the wave pattern of the sound on the screen.

And that’s where they make all their decisions to make their edits.

So that’s kind of in reverse of what you’re doing.

But at the same time, it’s the same principle.

It’s like you can manipulate the data differently because you’re quote unquote looking at it differently.

I hadn’t thought about it because in the old days they’d have these reel to reel sound tracks and they would be listening for where the sound would drop and then they’d splice it, cut it, tape it back together to make the final product.

Right.

Yeah, so yeah, it’s very cool.

Yeah, and we’ve actually had an artist take a sonification and reconstruct an image from it.

That piece I think is in a museum outside London right now.

And I just thought that was so creative, right, to take the sound that was produced with that data but then to essentially backstep it until they had an image.

And their resulting image was meant as an artistic interpretation, but it was not super far from the original.

Like it had strong elements of that original data.

And I just, I love those kind of ideas of just play and creativity, which seemed like they would be bad words in the workspace, right?

They’re really not.

It’s just about opening up your brain a bit and just trying to think about things a little differently.

So I have one issue with you, if I may.

Here we go.

Here we go.

Not your fault, not your fault.

So, in the movie Contact...

Yeah, I love that movie.

...which was based on the novel by Carl Sagan, the lead protagonist, Ellie Arroway was her name, the character’s name.

She was an expert in the search for extraterrestrial intelligence, which is normally done with radio telescopes, and she would sonify the radio signal into headphones, and she’d be listening on headphones to the radio signals.

And here’s my issue.

The word radio, people think of as sound, but radio waves are electromagnetic energy.

And so it makes people think that the aliens were sending us sounds, but they were not.

They were sending us radio waves that we converted to sound.

We end up making a one-to-one correspondence between the word radio and sound.

Right.

And that movie didn’t help disavow people of that association.

So I blame you for that.

It’s one of my favorite space movies, but that is one issue that has always stuck out for me as well.

However, I will also say, you know, it is very commonly thought that these images are like direct snapshots as well.

So it’s just about being very transparent in what you’re doing, really describing what you’re doing in a way that’s clear and makes sense and just kind of reiterating, you know, we are not capturing space selfies and we are not capturing like space recordings.

These are translations, like you might translate English to Mandarin, right?

You have to have a way to interpret it.

So reminds me, this is a Cosmic Query, so I want to make sure we get to the questions.

But catch us up again on what the Chandra X-ray Telescope does, which is one of the great observatories, along with Hubble and a few others, each in their own band of light.

Remind us what Chandra does that other telescopes can’t do, and what it sees that other telescopes can’t see.

Yeah, so Chandra is kind of like part of that Super Friends team, if you will, with Hubble, with Webb, with these other telescopes.

And Chandra is our sharpest X-ray view, right, of that high-energy universe.

It has still to this day, after 26 years of being in operation, you know, it’s looking at things like exploding stars, it’s looking at things like clusters of galaxies and the hot gas that envelops them.

It’s looking at young stars and the sort of X-ray temper tantrums that they can have.

It’s looking at all of these very energetic phenomena across the universe.

It’s exciting because Chandra has such exquisite resolution.

It’s half of an arc second, and an arc second is just like a tiny unit of angular size.

There are, like, 3,600 arc seconds in a degree; essentially, you’re just looking at one half of an arc second.

It’s kind of the equivalent of if you’re looking at a dime from a few miles away.
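The dime comparison can be checked with small-angle arithmetic. The sketch below is only an illustration: the dime diameter (17.91 mm for a US dime) and the helper names are assumptions that do not come from the conversation.

```python
import math

ARCSEC_PER_DEGREE = 3600  # 60 arcminutes x 60 arcseconds
METERS_PER_MILE = 1609.344
DIME_DIAMETER_M = 0.01791  # assumed: US dime, 17.91 mm across


def arcsec_to_radians(arcsec: float) -> float:
    """Convert an angle in arcseconds to radians."""
    return math.radians(arcsec / ARCSEC_PER_DEGREE)


def distance_for_angular_size(diameter_m: float, angle_arcsec: float) -> float:
    """Distance at which an object of this diameter spans this angle.

    Uses the small-angle approximation: angle (radians) = diameter / distance.
    """
    return diameter_m / arcsec_to_radians(angle_arcsec)


# How far away must a dime be to span half an arcsecond?
distance_m = distance_for_angular_size(DIME_DIAMETER_M, 0.5)
distance_miles = distance_m / METERS_PER_MILE
print(f"{distance_miles:.1f} miles")
```

Under these assumptions the answer comes out between four and five miles, which is consistent with "a few miles away."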

It’s really amazing.

So that kind of helps Chandra be, if I can refer to my past as a biologist, as a microbiologist, it kind of allows Chandra to be like the X-ray microscope of the universe.

It really can dig down very deep, very sharp.

Yeah, very cool.

Love that analogy.

It’s like Chandra’s resolution is comparable to Hubble’s, but it’s looking at high resolution.

It’s looking at those high resolution, the X-ray photons in the universe of which there are not as many as there are, the stars, for example, though they give off X-ray light, they are not giving off as much output as they would for infrared or optical light, typically, unless something really wild is happening.

So there tends to be a slight dearth of X-ray photons in the universe, which just makes it really challenging to do.

And Chandra’s engineering was such that you can’t use normal mirrors, you have to use these barrel-shaped nested mirrors of which Chandra has four pairs, so that you can just skim the X-rays down.

It’s like it’s grazing incidence, it’s like skipping a rock across a pond, right?

And that lets you then focus them down at the detectors to be able to capture that.

That itself was an engineering discovery, perhaps, right?

Oh, yes.

That you can focus X-rays that way.

Because, you know, we have a lens, you put light through it, you can make an image on the other side.

That’s what a magnifying lens is.

X-rays don’t do that in glass.

You got to be more inventive about it.

I just like the fact that you said it was part of the Super Friends.

Oh, the telescope?

And you made a DC reference, and the only DC character that has X-ray vision is Superman.

So that makes you guys the Superman of the Super Friends.

I guess so.

I see what you did there.

Yeah.

Chandra itself.

Plus, you guys are good at finding black holes, black holes that are in binary star systems.

Yes, Chandra is really a black hole hunter.

Why is Chandra so good at finding black holes?

Well, the exquisite resolution and the ability to peer through that gas and dust that can clog the hearts of galaxies that Chandra gets to see.

So Chandra is looking at things like Sagittarius A star, the supermassive black hole in our own galaxy.

It’s looked at that over and over and over again.

And one of the benefits of a mission with such longevity is that you get to look at something over time and build and build and build that data for a really deep snapshot.

And so with like Sagittarius A star, like we’ve seen it snacking on small snacks, like a little asteroid here and there, an after school snack.

We’ve seen it like devouring a larger Thanksgiving size meal with a big fat star.

We’ve been able to see that different things happening over time, which is I think really lovely.

But yeah, black holes are one of my favorites.

I mean, but it’s rendered visible because as it gets very hot spiraling down, it radiates X-rays.

That’s how that’s the mechanism, correct?

Exactly.

And again, that’s that high energy phenomena, right?

There’s not as many high energy phenomena, things that are, you know, burping out super high energy particles, things that are exploding, things that are colliding as there are, say, normal stars.

But there’s still an awful lot to look at throughout the universe in X-ray light.

So there’s just always something new.

How about pulsars?

Are they something that you guys pick up a lot on or?

Yes, pulsars are fabulous because they’re kind of like, I kind of think of them as like, you know, zombie stars, stars that have kind of come back to life, a star that was, you know, massive, its core collapsed.

It just, star explodes, it burps all over the place.

That’s just amazing.

And what’s left is this star core about the size of Manhattan, perhaps, and it can spin really fast.

And that kind of, again, incredible high-energy phenomena is a perfect thing for Chandra to look at.

I can’t even count, I’d say, the number of pulsars Chandra has looked at.

I’d actually be interested in that, but a lot, a lot.

If we didn’t have X-ray eyes on the skies, we would have no idea these phenomena were happening, mostly.

I mean, unless it also gave us visible light or infrared, there’s a certain blindness we have without an X-ray telescope.

Exactly.

Very fair.

I mean, I like to liken it to the Wizard of Oz scene, when the tornado is over and Dorothy steps out of the black and white, opens the door and it’s like this technicolor universe now.

To me, Chandra and other telescopes across wavelengths are providing us that gorgeous technicolor experience that we didn’t have access to 20, 30 years ago.

This is relatively new.

We’re able to get more and more of the color of that universe, if you will.

Now, we get to go down the yellow brick road and it’s really lovely.

I first saw Wizard of Oz on a black and white TV.

I had no idea.

Oh, wow.

Anything different happened when she stepped through the door.

Oh, wow.

Really?

Nothing was in color for me.

On a 19-inch black and white living room TV.

That’s pretty cool.

Did the movie feel boring without the color?

No, I didn’t know that there was something.

Yeah, there was nothing.

You weren’t missing anything because there was nothing to miss for you.

Whether I said black or white, I got a black and white TV.

Why would I think anything different’s going to happen?

Right.

That’s very cool.

That’s how we were before we had all these telescopes, too.

We didn’t know what we were missing.

We didn’t know what we didn’t know.

You didn’t know what you didn’t know.

Right.

Is the universe ever going to get a brain and a heart?

I don’t know.

That’s a good question.

Yeah, we’ll put that on the list.

So Kim, Chandra has also studied Eta Carinae, which is quite the spot for action in our galaxy.

Nice.

Yeah, tell me more about that.

It’s very near to us.

It’s only like 7,500 light years away, so that is in our sort of local galactic neighborhood, if you will, well within the Milky Way.

An afternoon trip to the store.

Exactly.

The Cosmic Store, but yes.

You can Uber there.

Yeah.

Is Eta Carinae the product of a dead star, or is it a star-forming region?

I always forget which that is.

The general area around it is a star-forming region, but the star system itself is like a massive binary, though it could be, there could be three, but I’m not sure if it’s two or three these days, but there’s at least two pretty massive stars, like I want to say 30 and maybe 90 million times the size of our Earth, so very large stars that are like hanging out together.

And one of them goes through these massive outbursts, which created like a near supernova event because it was incredibly bright and it was witnessed from Earth in the 1840s-ish.

It was called the Great Eruption.

And then it dimmed and now people think that it could explode because it has been losing its material, if you will, over time.

And telescopes like Chandra, like Hubble and others are able to monitor it over time, which again for longevity missions really provide you with a fantastic way to see that change over a human time scale.

There’s no substitute for that baseline.

Yeah, exactly.

Like the course of my son’s life, essentially, like we can see changes in that star.

And Chandra is detecting like the really powerful stellar winds from that explosive or near explosive event.

And then Hubble is capturing some of that cooler gas and dust that’s kind of created this bipolar structure called the, I think it’s the Homunculus Nebula, right kind of around the star system.

And so we do have a 3D model of that, that folks can take a look at on the Chandra website, chandra.si.edu/3dprint.

Oh, interesting.

And can we rotate it on the website as well?

Yes, you can.

There’s a little video that plays it going around.

And so you can kind of see there are these two lobes of material.

And then the Chandra material, which is not in this 3D model, kind of hugs it.

It kind of looks like a giant space croissant of high energy material wrapping around the homunculus.

And then the two stars or maybe three are buried like inside.

Did she just say space croissant?

I know.

And I’m so hungry right now.

I know.

I’m kind of hungry too, which is why it came to mind.

But it’s like a hug.

It’s like a hug around the homunculus, which I think is very cool.

But so these types of 3D models, like these are actually done for scientific analysis.

And then we’re able to 3D print them so that scientists can study them and understand them and display them.

But also importantly, so people who are blind or low vision or people who just really like to study tactilely or learn tactilely have access to a different way of knowing.

So okay, so I think we, let’s get on to some Q&A here.

All right.

Chuck, you got the list.

And guess what?

They are ready to go, our listeners are.

I’m so impressed with the questions that people ask.

Listen, these people are not playing around.

Yeah, yeah.

I’m like, yeah, yeah.

All right, let’s start off.

And they’ve been cued that Kim is our guest.

As a matter of fact, they have.

They completely know this, okay.

These are specifically questions for you, Kim.

Love that.

Yeah.

The audience knows who you are, and they’re very excited to ask you questions.

All right.

So this is Russell Harvey.

Russell is from Colorado, and he says, how does sonification of X-ray data from Chandra help us understand cosmic phenomena like black holes or supernovae in different and new ways?

So what are you doing or finding that is proprietary to you, that you can say, oh, yeah, that was Chandra?

That’s an important question, because otherwise you’re revealing just what is already known that a sighted person would see.

So do you have some insights there?

Yeah, like some gossip, like, girl, you know, I heard from Chandra.

You know what I heard from Chandra?

Well, I can say, let me start out generally, right?

So sonification helps us pick out patterns in data that can be hard to see.

So they’re often particularly helpful in things like studying gravitational waves or understanding variable stars and that sort of thing, where you have to pick out patterns in a lot of data that can be hard to see in only an image.

So you can think of, like, rhythmic flickering.

So for black holes, at least, there have been, like, additional thoughts about things like the Perseus cluster, because when that resulted in a Perseus cluster that showcased that there was this massive, supermassive black hole at the core burping out into the hot gas around it, causing these sound waves, these pressure waves, which are sound waves, and that that note is about a B-flat, about 57 octaves below middle C.
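The "57 octaves" figure can be turned into a frequency, since each octave down halves the frequency. This is a rough sketch, not the method used for the actual sonification. The conversation does not say which B-flat is the reference, so the code assumes the B-flat just above middle C (about 466.16 Hz in standard A440 tuning), and the names are hypothetical.

```python
BFLAT4_HZ = 466.16  # assumed reference: B-flat just above middle C (A440 tuning)
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def shift_octaves(freq_hz: float, octaves: int) -> float:
    """Shift a frequency by whole octaves; each octave is a factor of two."""
    return freq_hz * 2.0 ** octaves


# The pressure-wave "note" in the cluster gas, 57 octaves below the reference.
perseus_hz = shift_octaves(BFLAT4_HZ, -57)

# Time for a single oscillation of that wave, in years.
period_years = 1.0 / perseus_hz / SECONDS_PER_YEAR

# Sonification reverses the shift to bring the note back into hearing range.
audible_hz = shift_octaves(perseus_hz, 57)

print(f"{perseus_hz:.2e} Hz, one cycle every {period_years / 1e6:.1f} million years")
```

With this choice of reference, one cycle takes on the order of ten million years. Starting from the B-flat one octave lower would double that period.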

Well, listening to the sonification, like bringing that note, if you will, back up into the realm of hearing by taking the image and scanning those waves in the image so that you can hear them through sound.

What I have heard is that researchers have noticed that there were additional ripples that had been missed originally.

So that is something that I haven’t seen a paper on it or anything like that, but I have heard discussions that that is the sort of thing that could be really useful to do more of this idea of being able to find small details that either wasn’t as obvious in the visual or numerical data or…

And you would have overlooked it.

Exactly.

And that’s the thing, like when you look at something a lot or if you’re just staring at something, you’re getting all of the data at once.

When you’re listening to it, you’re actually given the gift of time, right?

So your brain responds in a different way because you’re getting that data sort of parsed out to you based on the tempo of the sonification.

And I think that’s kind of an exciting space to do more experimenting.

And just to remind us, the sonification is basically a scan of the image where each row has some acoustic, as the scan comes across a star or an object that has X-ray flux, does the pitch go up or does the volume go up?

But what typically would happen there?

Yes.

So it’s a mathematical scan across the image or from the center out or all of that.

And like pitch, tempo, volume, instrument choice, like all of those are the variables that we’ll use in order to describe.
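One way to picture such a scan is sketched below. It is a toy mapping chosen for illustration, not the Chandra team's actual pipeline: the function name, the frequency range, and the choice of "row height sets pitch, brightness sets volume" are all assumptions.

```python
def sonify_columns(image, low_hz=220.0, high_hz=880.0):
    """Scan a 2D brightness grid left to right, one column per time step.

    Assumed mapping (hypothetical): a pixel's height in the image sets its
    pitch, and its brightness relative to the brightest pixel sets its volume.
    Returns one list per column holding (frequency_hz, volume) pairs.
    """
    n_rows = len(image)
    n_cols = len(image[0])
    peak = max(max(row) for row in image) or 1.0  # avoid dividing by zero
    timeline = []
    for col in range(n_cols):
        notes = []
        for row in range(n_rows):
            brightness = image[row][col]
            if brightness <= 0:
                continue  # empty sky stays silent
            # Row 0 is the top of the image and gets the highest pitch.
            height = 1.0 - row / (n_rows - 1) if n_rows > 1 else 0.5
            # Interpolate on a logarithmic scale, the way musical pitch works.
            freq = low_hz * (high_hz / low_hz) ** height
            notes.append((freq, brightness / peak))
        timeline.append(notes)
    return timeline


# A tiny made-up "image": one faint source mid-frame, one bright source at top.
image = [
    [0, 0, 9, 0],
    [0, 3, 0, 0],
    [0, 0, 0, 0],
]
timeline = sonify_columns(image)
for step, notes in enumerate(timeline):
    print(step, [(round(f), round(v, 2)) for f, v in notes])
```

Playing each time step at a fixed interval would set the tempo, and a fuller version could assign a different instrument to each kind of light, as described in the conversation.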

Instrument choice.

I love it.

Wow.

Look at that.

I want a saxophone in space.

Well, we do.

Yeah.

So if you have like a heavy-duty data set that’s got a lot of different kinds of light, choosing disparate instruments to assign to the data, so that you can really tell what’s playing when, lets you kind of identify different parts of the image or the information that you’re trying to decode.

Is there any use of an oboe or a didgeridoo?

Yes, I would say all instruments welcome, including voices.

Yes, I think all instruments are welcome.

What a great answer and thank you, Russell.

All right, this is Hugo Dart.

Pardon me, Hugo, Hugo Dart.

Hello, Dr. Tyson, Dr. Arcand, Lord Nice.

This is Hugo Dart from Rio de Janeiro, Brazil.

Ah, chook-a-boom.

Anyway, he says-

Do you realize they have one of the largest aerospace industries in the world, in Brazil, and all you can do is shake your ass when you say Brazil?

I did not know that.

Do you know why?

Because in Carnival, they do not show aerospace.

That is a valid point.

Yeah, it’s valid.

Yeah, okay, you got me on that one, okay.

Hugo says, I’m with my seven-year-old daughter Olivia, who is also a very big StarTalk fan.

So hello, Olivia.

Here’s our question to both of you.

Why will space freak us out?

Oh, because you have a book.

Kim has a book coming out.

Yes, that’s such a sweet question.

Called, Why Space Will Freak You Out.

Oh, get out.

And it’s a book that is intended to be like parent-child combo.

Exactly.

Like a family reader kind of thing.

How much do you pay Hugo to send in this?

I didn’t.

I swear.

But these questions are so lovely.

I mean, my goodness.

Kim, this is your ninth book.

So tell us about that book.

Yeah, this is my first sort of kid family reader type of thing with my amazing co-author, Megan Watzke.

And it’s meant to be a little fun.

My husband loves horror movies.

I think it’s because he was born on Halloween.

And so I’m not a fan of horror movies, but he watches a lot and I think it’s just kind of gone into my brain.

So my humor is a little darker, I would say.

And this idea of like finding fun in the creepy and the weird and the strange and the exotic in the universe is something that’s just kind of been filtering in.

And so it’s a fun look at things because it’s like, what is weird and creepy in our own solar system, in our own galaxy and then like well beyond.

So you can think of things like exoplanets, like the exoplanets that we have found so far.

Some of them are so gosh darn weird.

Like, you know, world where it rains glass sideways at like 5,000 miles an hour, lava worlds, frozen worlds, dead worlds going around zombie stars.

I mean, it just, it sounds like science fiction.

And so it was just kind of an opportunity to think of some of those fun things, those weird things, those freaky things, and just talk about them in a way that’s hopefully not too scary.

But I guess to answer the question, like really it’s just that space is huge and mind boggling and extreme and weird.

And we’re very lucky to live here on our cute little rocky planet where things are relatively, well, not weird.

Kind of, Chase.

Comparatively speaking.

Comparatively speaking.

Speaking of which, here’s a follow-up from Olivia.

Okay, actually it’s from me.

I read this or heard this someplace, but please tell me, how far do we have to go towards the sun or away from the sun where we don’t have this planet anymore?

Venus is to our left and is 900 degrees.

Mars is to our right, once had water and does not.

So we are sandwiched in between two wholly inhospitable planets.

So you’re asking what you’re asking me now?

So that’s what I’m saying.

Could we nudge like a mile to the left or a mile to the right kind of thing?

Yeah, that’s what I’m saying.

So how far could we go towards Venus and still live?

And how far could we go towards Mars?

And not freeze?

Surely there are people who know this; I don’t have that answer.

But Earth has a certain recovery mode that could make up for small changes.

You can find a new equilibrium where it is.

It can still function.

But not too far.

You don’t want to push your luck.

Kim, do you have any insights there?

No, I mean, it’s a great question.

And I’d kind of love to know the answer.

But, I mean, I feel like the biology side of me is kind of like, some life forms on Earth, probably not humans for a long time, could adapt to slightly more extreme temperatures either way.

Like we’ve seen with tardigrades, right?

Water bears, they can live in pretty extreme environments.

So there’s some possibility that if we nudge left or right, I’m using, which are not the right directions.

But you know what I mean?

Closer to Venus or closer to Mars, that there would be something that could adapt and survive that.

But I feel like humans would probably not be on the list for very long because food sources, other things would be affected so quickly, at least I think.

OK.

And by the way, there is a unique left and right in an orbit, just the same way Rive Gauche, that’s the left bank in France of the River Seine, whatever the river is there.

So, the left is the shore that is on your left when you’re moving with the river.

That’s a unique left.

That’s a unique left.

Yeah, and then a unique right.

So when I say Venus is on the left, we are the river of Earth passing between Venus and Mars.

Sweet.

That’s pretty sweet.

Only Neil can make the SI.

Only Neil can make an orbit sound like sex.

I know, exactly.

You know?

All right, here we go.

This is Jeffrey C.

He says, hello, SMEs.

My question is-

SMEs?

What’s SMEs?

I don’t know.

Subject Matter Experts.

Oh, excuse me.

So he’s just off the YouTube.

He was like, screw Chuck.

I never heard it abbreviated.

SME, subject matter expert.

Okay, thank you, Kim, for filling me in on that.

He says, my question straddles the line between science and engineering.

My understanding is that X-ray observatories employ grazing incidence mirror designs primarily to minimize scatter and reflection losses rather than to optimize for minimal wavefront distortion.

To me, achieving diffraction-limited imaging in X-rays sounds wicked awesome, but it seems like current X-ray telescope designs prioritize maximizing the collection of photons at the detector instead.

What’s the reasoning behind that?

Please teach me more.

Now, first of all, let me just say this.

Don’t nobody need to teach you nothing.

Who’s going to teach you?

Look into that question.

This guy just gave us the anatomy of the telescope in such a way that you show off, you big fraud.

Like, please tell me, how exactly do we get the photons to the detector?

You know damn well.

And by the way, I love it.

I don’t know about birth and death.

I don’t know about the photons on a detector.

But Kim a moment ago explained that she didn’t use the word grazing, but that’s what it is.

Yes, we were talking about the skimming.

It’s skimming, with the rock on the…

The fellow raises a very important point.

Jeff from Boylston, Massachusetts.

We’re playing with you, Jeff.

Which by the way, I have to give the shout out to Massachusetts fellow New Englander with the wicked awesome.

That was perfectly, I mean, I just love this audience so much.

Yeah, wicked is a very New England expression.

Oh my God.

Yeah, wicked.

Ah yeah, very wicked, wicked smart.

Wicked smart, Kim Arcand is very wicked smart.

She came here in a car.

So I guess I would say like there’s kind of two issues at hand, I talked about a little bit earlier already, so maybe it’s sort of helped, but X-ray photons, very energetic, very incredibly difficult to focus, right?

This is high energy, it is a bullet going through a wall kind of thing.

So because of that and needing the grazing incidence and having to like skip down across a pond, as we talked about earlier, there’s two things to consider, the physics and the engineering limits.

I’m not an engineer, so I cannot speak to this in detail.

But I will just say that we already for Chandra had to have mirrors like really polished, incredibly smooth.

Like if you smooth down Colorado, Pike’s Peak would be like maybe an inch tall, right?

Like it’s a really incredible accomplishment or feat that American engineers had to do just to get the Chandra mirrors to like near atomic levels, never mind what you would need for that kind of diffraction-limited imaging, which would be like atomic scale perfection.

And of course, like the alignment in them as well, and then sending it up into space, into that like harsh, cold environment where we would have to operate like perfectly.

So there are some engineering limits there that I would say.

USA, USA.

Yes, yes.

But also it also goes back to like that photon starved universe that I mentioned earlier in the show, right?

X-ray sources are typically a bit fainter than all of the optical or all of the infrared data that can be gobbled up by these light buckets, right?

And so the idea of building an observatory that would be able to collect enough of them, that is kind of like, that has been the priority, right?

So maximizing your collecting area and getting, I don’t want to say good enough, because Chandra’s resolution is incredible to me.

But like being able to get there with Chandra’s arcsecond resolution was an absolute feat.

So getting to go beyond that, it really becomes like true engineering and physics issues that have to be fixed by bigger brains than mine.

So all I can say is wicked awesome question.

I don’t exactly have an answer, but I love that you asked it.

But it comes down to, like you said, you don’t have all that many photons to work with.

So you can’t prioritize resolution.

What good is your resolution if you didn’t have the photons?

You got nothing to collect.

So I have to agree with what was supposed there, that it’s an engineering decision to maximize your access to photons rather than the resolution itself, even though you still have very good resolution.
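A rough back-of-the-envelope sketch of the trade-off Jeff is asking about: the Rayleigh diffraction limit for a filled circular aperture, compared with the resolution a telescope actually achieves. The 1.2 m aperture, the 1 keV photon energy, and the 0.5 arcsecond achieved figure are illustrative assumptions, not numbers quoted in this episode. A real grazing-incidence telescope also has a thin annular aperture rather than a filled one, so treat the result as an order-of-magnitude estimate only.

```python
import math

# Physical constant: Planck constant times speed of light, in eV * nm.
HC_EV_NM = 1239.84193
# Conversion factor from radians to arcseconds.
RAD_TO_ARCSEC = 180.0 / math.pi * 3600.0

# Illustrative assumptions (not taken from the episode).
APERTURE_M = 1.2           # assumed outer mirror diameter
PHOTON_ENERGY_EV = 1000.0  # assumed "typical" soft X-ray photon
ACHIEVED_ARCSEC = 0.5      # assumed achieved angular resolution


def wavelength_m(energy_ev):
    """Photon wavelength in metres for a given energy in eV."""
    return HC_EV_NM / energy_ev * 1e-9


def rayleigh_limit_arcsec(wavelength, aperture):
    """Rayleigh criterion, theta = 1.22 * lambda / D, in arcseconds."""
    return 1.22 * wavelength / aperture * RAD_TO_ARCSEC


limit_arcsec = rayleigh_limit_arcsec(wavelength_m(PHOTON_ENERGY_EV), APERTURE_M)
print(f"diffraction limit: {limit_arcsec:.2e} arcsec")
print(f"achieved / limit:  {ACHIEVED_ARCSEC / limit_arcsec:.0f}x")
```

Under these assumptions the theoretical limit is a few ten-thousandths of an arcsecond, more than a thousand times finer than the assumed achieved resolution. That gap is the one Kim attributes to mirror smoothness and alignment, and it is why collecting area gets priority.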

Right.

Definitely.

Yeah.

Okay.

Hey, well, by the way, Jeff, we love you, you big show-off.

Definitely.

That was a great question.

All right, this is Mario Funes, I think Funes or Funes.

Mario, I’m going to go with Funes.

How do you spell it?

F-U-N-E-S.

Funes.

Funes.

Funes.

Yeah.

All right, Mario Fun, okay.

This is Mario from Fort Lee, New Jersey, way right across the river here.

Across the moat.

Oh man, that was rough, bro.

Why you got to do that?

Fort Lee is across the Hudson River from Manhattan.

Yes, okay.

Yes it is.

But I love the moat because, you know, I live on the other side of the moat too.

X-ray astronomy often relies on assigning colors to energy bands that are invisible to the human eye.

How do you strike a balance between scientific fidelity and aesthetic impact when choosing color palettes and have audience reactions ever led you to rethink your visualization approach?

Does that also apply to sound?

How do you get your baseline for sound?

That’s not what the person asked.

Okay, but I’m just throwing that on top of Mario’s question.

No, pay the Patreon fee and then you can ask the question.

I’m only asking half a question.

That’s $2.50.

I can give you $2.50.

I’m trying to do two for one.

Two for one.

Now, this is a great question because I love talking about this topic because it’s so useful when you can kind of underline the idea that these are visual representations of data that is invisible to human eyes, right?

And we’re capturing that information.

We are translating it into the visual representation.

But those are human beings doing that process using software that’s been coded by humans and making choices that that human thinks are the best choices.

But there’s obviously going to be a wide range of possibilities.

So for color palette specifically, we have pretty much settled on like an RGB red, green, blue for low, medium, and high energies for our color coding typically.

So that means often Chandra images if combined with say infrared or optical kinds of data, Chandra will often be colored in like blues and purples.

And then say the Hubble data in the greens and the Webb data in the infrared in the reds, right, that’s often what we’re doing because we have found that that does tend to make an image that is both aesthetically pleasing, but does align with that scientific information.

However, there are many times when the color palette has to get thrown out of the window because the science says so, right?

The data says so.

If you’re getting a massive data set and you are trying to pick out different kinds of chemical emissions in a supernova remnant and you’re codifying where the pockets of iron and the silicon and the sulfur and the calcium and the oxygen are, you have to go into a different type of color scheme.

So I guess the shortest answer is that we do have a kind of standardization, but the science drives the story, the science drives the visual, and then we adapt based on the needs of that.
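The low, medium, and high energy to red, green, and blue convention Kim describes can be sketched in a few lines. This is a minimal illustration, not the Chandra team’s actual pipeline: the function names, the tiny 2x2 count maps, and the simple peak normalization are all assumptions made for the example.

```python
def normalize(band):
    """Scale a 2D grid of counts to the range 0..1 by its own peak value."""
    peak = max(max(row) for row in band)
    if peak == 0:
        return [[0.0 for _ in row] for row in band]
    return [[value / peak for value in row] for row in band]


def compose_rgb(low, mid, high):
    """Map low/mid/high energy count maps to red/green/blue channels.

    All three grids must have the same shape. Returns a grid of
    (r, g, b) tuples with 8-bit channel values.
    """
    r, g, b = normalize(low), normalize(mid), normalize(high)
    return [
        [
            (int(255 * r[y][x]), int(255 * g[y][x]), int(255 * b[y][x]))
            for x in range(len(low[0]))
        ]
        for y in range(len(low))
    ]


# Hypothetical photon counts per pixel in three energy bands.
low_band = [[0, 4], [2, 0]]
mid_band = [[1, 0], [0, 1]]
high_band = [[0, 0], [5, 10]]

image = compose_rgb(low_band, mid_band, high_band)
for row in image:
    print(row)
```

Swapping which band feeds which channel, as in the Bullet Cluster story later in this answer, is a one-line change to the arguments of `compose_rgb`. That is part of why the choice stays a human decision rather than a fixed rule.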

Now, to the last part of the question about rethinking visualization approaches.

Yes, so I’ve actually done studies because I like people as much as I like space.

I like to learn about both of those things because we are not just studying space as robots, like we are humans studying the universe around us.

And so to me, at least, it’s just as important to understand human perceptions and human understandings, human meaning making, right, of that type of data.

So we have done studies on looking at how humans respond to our visualizations based on different kinds of color codes and different kinds of aesthetic appeal.

And the interesting result was that it didn’t actually make a difference.

Even if some of the color schemes would have been like a bit like, oh, to me, in general, any color scheme that still got across the data that was described like what we were doing, that was the winner and it didn’t exactly matter how it looked.

So people appreciated being able to understand what the colors meant, I guess is the point.

Now, there has also been a case that I remember so, so specifically when it came time to do the Bullet Cluster, which is a cluster of galaxies and kind of like the textbook example with Chandra and Hubble that helped show this direct proof of dark matter.

It showed the separation of the hot gas from Chandra and then the normal matter, sorry, the normal matter, if you will, from all of the dark matter, which was gravitationally mapped, right?

And it was all kind of with the Hubble data showcasing where the galaxies and everything were located.

And for that image, typically, the Chandra data would have been in blue as the highest energy in the data set.

But when we first made the image, we did a little testing of it.

And it just, when trying to sell the story of all of this hot matter, the separation from the dark matter, it just was not vibing for people.

So we actually inverted the color scheme, put Chandra in the pink reddish color, and then put the dark matter map in the blue.

And that worked better for people for that specific scientific discovery or scientific expression, and has since been kind of like a de facto for how we color code those examples of galaxy clusters that are showcasing the separation of normal and dark matter.

By the way, for anybody interested, I don’t know the name of it, but Neil gives one of my favorite explainers on how we take any bandwidth and make it so that we can look at it with our human eyes.

And it is a fascinating explainer.

Oh, really?

Okay.

We’ve forgotten all about that.

It’s in our archives.

Yeah, it’s one of my favorites.

Actually, really?

You never told me that.

Yeah, you did an exceptionally incredible job of that, which is redundant, but it was so clear and it was something that secretly I never understood.

I never understood.

And then after that explainer, I was just like, all right.

That’s why they’re called explainers, I guess.

Yeah, I guess so.

And Kim, it should be fair to declare that if you assign an RGB to a low, medium and high energy bands, I think we can, with honesty, say that if the human retinal sensitivity were shifted to that realm, it is the color picture you would see.

Yeah, they do tend to call them true color representations in that RGB set up for that reason.

I like to think of all of them as representative color, right?

Like we’re not the mantis shrimp being able to see all sorts of colors all over the place, right?

But I do think it is fair to say that that is a more true representation.

Yes.

All right, time for a few more.

This is Neil Cameron.

Neil says, hello, Lord Nice, Dr.

Arcand, Dr.

Tyson.

Neil here from Ansonia, Connecticut.

I’m gonna keep this simple.

Black hole sonification.

Do they sound like?

Or more like?

Bloop.

And.

How do you read that off of this?

Oh, cause he said, do they sound like Godzilla roar or just a little bloop?

So I gave him my best Godzilla roar, to work for Neil.

And then the little bloop is bloop.

Isn’t it just the gas swirling that we can sonify?

Can sound exit a black hole?

This is a great question too.

I love all of these questions.

These are the best.

So, yes.

So nothing can escape a black hole, right?

Like sound can’t escape, light can’t escape.

So yes, we are not holding up a microphone to capture sound of black holes, but black holes do have this potential, supermassive black holes in the center of galaxies do have this potential to, as I mentioned earlier, kind of like burp out into the surrounding hot gas around it and make these pressure waves or the sound waves.

And so we’re taking that information, which we can see in images by the rippling, mathematically mapping it to sound.

So we are choosing sounds that make sense for it.

And we have done one sonification of a large population of black holes in the Chandra Deep Field South, where we actually did apply little like boop boop kind of sounds.

It sounds a little bit like Imogen Heap was playing it, because we were trying to showcase a massive field with thousands of black holes and we assigned the sound based on the energy level of those X-rays.

So we just chose low, medium and high sounds for those X-rays.
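The idea of assigning low, medium, and high sounds by X-ray energy can be sketched as a simple lookup. This is an assumption-laden illustration, not the actual Chandra Deep Field South sonification: the band edges in keV, the choice of notes, and the left-to-right scan order are all hypothetical.

```python
# Hypothetical energy bands in keV: (lower edge, upper edge, label).
BANDS_KEV = [(0.5, 2.0, "low"), (2.0, 4.0, "medium"), (4.0, 8.0, "high")]

# Assumed note choice: C4, G4, and C5 in equal temperament (Hz).
PITCH_HZ = {"low": 261.63, "medium": 392.00, "high": 523.25}


def band_for(energy_kev):
    """Return the band label for an energy, or None if it is out of range."""
    for lower, upper, label in BANDS_KEV:
        if lower <= energy_kev < upper:
            return label
    return None


def sonify(sources):
    """Turn (x_position, energy_kev) pairs into (x_position, pitch_hz) events.

    Sources are played in order of x position, as if a scan line swept
    across the image from left to right. Out-of-range sources are skipped.
    """
    events = []
    for x, energy in sorted(sources):
        label = band_for(energy)
        if label is not None:
            events.append((x, PITCH_HZ[label]))
    return events


# Made-up detections: position across the field (0..1) and energy in keV.
detections = [(0.8, 5.0), (0.1, 1.0), (0.5, 3.0), (0.9, 20.0)]
events = sonify(detections)
for x, pitch in events:
    print(f"x={x:.1f} -> {pitch} Hz")
```

Each event could then be rendered as a short tone, which is one way to get the “boop boop” texture Kim mentions for a field with thousands of sources.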

So I don’t know if that answers your question.

In the pressure waves, they actually are literally sound waves.

They are literally.

So the Perseus Cluster is that B-flat that I mentioned.

M87 also has them and it’s slower.

I don’t know what the actual note equates to.

But these supermassive black holes do make these sound waves.

They’re not singing exactly.

They don’t have vocal cords.

But they are by that sort of burping out into the area around them.

It’s all about the environment that black holes live in.

That’s going to give you the interesting, whether it’s a burp or some other kind of thing that we’re detecting.

And a big part of that question was, is it the swirling of gas?

And the answer is yes.

Yes.

Because the black hole is otherwise not talking to you.

Right.

Exactly.

Chuck has the best ever imitation of a black hole.

Oh.

I don’t know.

But I’m sure…

No, no.

You’ve done it.

Have I done it?

Yes.

Does it sound like this?

Yo, what’s up?

No, that’s not it.

It’s not someone hanging out in the shadows ready to mug you.

No.

Oh, no.

Oh, no.

This is the black hole.

Hey, hey, hey.

Hey, I’m very hungry.

Otherwise, I will lose some weight if I stop snacking in between my snacks.

I forgot all about it.

Well, you got a good memory, man.

That’s the best black hole I ever heard.

That is from…

Black holes could talk.

That is from so long ago.

That’s right.

I’m getting myself.

OK, here we go.

Rachel Ambrose says, Hey there, this is Rachel from Austin, Texas.

First, I wanted to say that the Chandra X-ray Deep Field sonification has been my ringtone for over a year now.

No way!

So thank you so much for that.

Totally way.

That’s cool.

Do you have downloadable ringtones on your website?

So I don’t think we put them into ringtone format, unless someone on my team did that, I didn’t notice.

No, she’s just made it her ringtone.

You make ringtones.

But we do have little snippets of the sound available on our website to download, so I guess if you can make your own.

I never thought to do that, it’s so lovely.

Wow, okay, very good, very good.

She says, my question for Kim is, if you could sonify one cosmic event that hasn’t been done yet, something you think would blow people’s minds, please tell us what would it be?

That’s tough.

My instinct, because I just love every data set pretty much, but I think something in the time domain, something that we’re seeing change over time, that’s something that I would really like to do some of, more of, like supernovae changing over time, gamma-ray bursts clicking on and off, tidal disruption events, just anything that changes over time on like a human scale, if you will, that we’ve been able to capture.

That’s something that’s kind of been on my list for a while.

I don’t know if it would blow people’s minds.

Is that because the imagery would be composing for you?

Yeah.

So it’s already giving you like a sort of temporal flow, if you will, like the data is changing at a rate that you could track and then represent in some interesting way.

And I don’t know, I think that would be very cool.

I don’t know if it would be mind-blowing, but it’s definitely something I would like to try.

That would be high on my list to work on with SYSTEM Sounds.

The time dimension is really what you’re referring to there as…

Exactly.

Yeah.

Okay.

Nice.

Hearing data can like draw your attention to different patterns.

Like we’ve talked about that a little bit already, that your eyes can overlook.

And so being able to sonify that changing data, I think could be really powerful.

All right.

Well, thanks, Rachel.

Wouldn’t it be cool if it sounded like scat music?

Like, you know, like a little Al Jarreau would be kind of cool.

You know what I mean?

Would be very cool.

Yeah.

Yeah.

Bow, bow, bow, bow, bow, bow, bow, bow, bow, bow.

Like…

This is Colin Zwicker.

He says, hello, Dr.

Tyson, Dr.

Arcand.

I’m Colin from Switzerland, and I have a question for Dr.

Arcand.

Well, thanks, Colin.

That’s what we’re here for.

You’ll cut nobody any slack.

Has there ever been a moment when a visualization revealed something that surprised you, something that you might not have noticed if you only looked at the raw numbers?

Yes, for sure.

I mean, and that is the kind of beauty of not just visualizing, but thinking about how to visualize and what method, what platform would be best for your data because in supernova remnants particularly, we’ve got 3D models of quite a few of them now based on observational data or computer simulations constrained by the observational data.

And what that has allowed us to show, in the case of Cassiopeia A specifically, is that there are some asymmetries.

That’s a supernova remnant, yeah.

A beautiful supernova remnant that Chandra has looked at a lot.

Some asymmetries there.

And the, which you can’t see, the image itself looks like so perfectly spherical and lovely.

But when you break it down into the 3D model, there are some interesting asymmetries.

And it also helps show that stars like Cassiopeia A can turn themselves inside out when they explode, because right before a massive star like that explodes, the iron is kind of really built up at the core.

And when you look at the distribution of that in the supernova remnant, the iron now is actually quite far out towards the perimeter, much farther than you would expect.

And so researchers were able to figure out that that star turned itself inside out.

And the use of 3D modeling was really incredibly important for that type of result.

So yeah, having different ways of looking at your data can be a very powerful tool for curiosity and discovery.

Very cool.

Wow.

Great question, Colin.

All right.

This is William Warren.

He says, Hi, I’m William Warren from Abingdon, Maryland.

You’ve created immersive VR experiences of space data.

Do you think future astronauts could use this kind of visualization technology to prepare for deep space missions?

Which is cool because every sci-fi movie, when they talk about going to another galaxy, they like reach and throw something into the ceiling.

And then the whole galaxy opens up and then they actually take their hands and like spread it and they zoom in.

Like we pinch on a computer screen.

Yes, that is to me, like the exciting feature.

Like I actually dream about whether it’s astronauts, researchers, non-experts, like being able to do that type of work, right?

Being able to go into an extended reality space of some sort and learn about things, train about things, whatever.

But astronauts are actually already doing that.

They actually use virtual reality and other kinds of extended reality technology applications in order to learn about spacecraft and like where they’re going to be docking, how they’re going to be docking, where things are located in their spacecraft.

Special kinds of like training modules have been done with VR.

It’s already a very useful tool because especially if you think about astronauts having to do a space walk and to do some kind of complicated procedure out in space, being able to walk through it in extended reality would be a very powerful tool to help kind of fire up some of those neurons differently because there’s tactile memory involved if they’re doing it in a very simulated environment.

There’s been a lot of military studies about that type of work with simulated environments, which is why I think that idea of bringing it further out into astrophysics research and communications and all that is such a great idea.

So yeah, it’s a great question.

So do you think they learned anything from Pokemon Go?

Oh.

Will they insert virtual reality creatures out there?

Yeah.

I honestly think Pokemon Go is a great tool.

That was such a brilliant XR application that people picked up on such a massive scale.

And I think having that kind of learning opportunity for different scientific and engineering activities would be fantastic.

I mean, I’m not saying aliens, but like, you know, different kind of experiences would be great.

It got people off the couch.

It did.

It did.

It did.

It got them walking around the neighborhood.

It clearly did something good because people were out.

And it created a whole community of people because people would meet up to find the characters and meet one another like, oh man, you’re a total nerd too.

My God, I thought I was alone.

I love that.

So great.

Yeah, super cool.

All right.

This is Alan Keiser who says, suppose you get one perfectly synchronized week on Chandra, James Webb, and an Event Horizon style array.

Yeah, OK.

OK.

What single measurement would you make to decisively test how black hole feedback regulates galaxy growth?

And what exact observable or statistic would you publish so the rest of us can tell if you’re right?

Alan from Santa Barbara.

And he says, PS.

I am another show off.

Yes.

Is that a question?

That’s amazing.

First of all, again, I feel like you might already know the answer to that question.

Yeah, I got a feeling Alan knows the answer to a lot of questions.

Alan’s sitting up in his classroom just like, these kids are stupid.

Let me ask Neil, Kim, something, these dumbasses, I’m teaching all day long.

Yeah, I feel like I’m going to be stumped by Alan, which is totally fine.

First of all, I would have to say it has to be a large community collaborative thing.

There would be no magic wand to give me any of those superpowers with my super friends.

By the way, the listing of Chandra and Webb and EHT together like that is a very cool group, I think, to be a part of, so major props to that.

But all of these types of scientific observations with these massive telescopes are truly highly collaborative and all very peer reviewed.

So no one person is ever making that kind of decision.

I feel like I’m being a bit of a party pooper there.

But I would say with the type of object I would pick is probably Centaurus Cluster or a similar type of galaxy cluster with a nice active supermassive black hole at the center.

Chandra could map the black hole activity, Chandra could map the surrounding environment of the black hole, the X-ray cavities, kind of carving out into the gas by those black hole jets.

Webb could definitely provide the sort of map and history timeline of the stars’ evolution throughout that area as well.

That would give you some really great constraining information.

And then the Event Horizon Telescope, which is another amazing telescope and has already taken some incredible images, would hopefully be able to get us that jet launch point, I would say, from the black hole.

That would be really cool.

So being able to figure out, like, you know, what I love about supermassive black holes is that they’re responsible for the care and feeding of the galaxy.

And I love that Alan’s question, I believe, is kind of getting at that point.

Like, how can we get even more data about it?

So that would be my suggestion.

And if I say something just about my people, Yeah.

my community of astrophysicists, unlike the example that Kim is describing, because that’s just you want to collaborate with people who would be on the various telescopes with a peer-reviewed project.

However, if something goes down in the sky that no one saw coming, and I discover it first, and I see it first, I can send out a notice that night for any telescope where they can peel off an hour of their observing program to get data, because each telescope is going to be different, a different focus, different bandwidth, different…

And if the object sets for me, it’s rising for somebody else in the world, right?

And so we, my community is very supportive when there’s a phenomenon that comes and goes, and you gotta get it in the spot.

Calling all telescopes.

Calling all telescopes.

Yes, Chandra does that all the time.

That’s exactly.

We have a code red.

That’s exactly how that had, how that would play out.

So that can happen for events that occur in the sky, but something so organized as the black hole in the middle of a galaxy trying to get the best data, like Kim said, that’s a peer-reviewed, pre-organized activity.

Cool.

So Kim, your Chandra deep field, is that a deep field unto itself, or did it do the same, quote, deep field that Hubble obtained?

So Chandra did its own deep field, the Chandra Deep Field South, but it also did coordinated campaigns with the GOODS deep field that both Hubble and Chandra have looked at, and there have been additional campaigns as well.

So deep fields are an area of really rich research for Chandra and Hubble and other telescopes, and you kind of can’t have enough of them.

Yeah.

You know how the first deep field was obtained?

Um, uh, no.

The head of the Hubble Institute.

And now I just remember it.

With his discretionary time, because every director gets a little bit of time where they can do whatever the hell they want.

Right.

And he said, find me the emptiest area of the sky you can.

He was flexing hard.

That’s play.

Play and creativity at work.

Yes.

And he said, let’s burn some telescope time.

Looking at nothing.

Looking at nothing.

And then something was there, and he was the luckiest director ever.

No, it’s not.

Because if nothing was there, he would have been in trouble.

You know, he would not have been the director the next day.

Yes.

But it was the same for Chandra, like because Chandra has less time to kind of give, right?

It has to look at objects longer than Hubble has to.

It was still a risky proposition, but they looked at this one spot, the Chandra Deep Field South, which was seemingly empty, and they looked at it for like 40 days and 40 nights, and they found this massive population of thousands of black holes and galaxies with black holes at their cores.

So it’s lovely when you can be creative.

Let me ask you about this.

Whether there’s empirical evidence or just your opinion, is there anywhere we can point a lens and not see something in the universe?

With our current telescopes?

In your opinion.

Because I know we haven’t looked at every place.

So in your opinion, is there any place we can point a lens and not see something?

Up your ass.

I gotta go.

No!

I gotta go.

Cause we’re not gonna keep that in the show.

Oh, no.

And I gotta go.

Because if we don’t keep that in the show, then there’s no reason for me to exist.

I have no reason to exist.

Oh, wait.

If we don’t keep that in the show, I have no reason to exist.

Like, that’s, you can’t get better than that.

So I’ll lead off and then Kim will follow up on that.

So I think a better way to say that is, if you have a telescope that has opened a new window to the universe in whatever way, in time stamps, in wavelength, in how big it is, if it’s a telescope that did not previously exist and you put it anywhere, it’s going to make a discovery because it’s looking at the universe beyond the fence that was set up by everything else you’ve been using to look at the universe.

Now, but the universe is so vast.

Kim, I don’t think there’s a place where there’s nothing happening.

What do you think?

Oh, I agree.

I mean, like what Hubble did for the optical field, what Chandra has done for the X-ray field, what Webb has done for the infrared field so far.

Each time we launch these new telescopes, we’re finding those things deeper, earlier, back further in space and time.

It really is, I think, mostly at this point, a limit of our technological achievements.

I don’t know.

It’s a great question.

It’s a very exciting thing to think about.

Props to you, props to you.

Well, thank you, thank you.

I’m going to say that the original answer was better than my question.

And I think I don’t even, I think, fully pronounce the words in that comment.

Okay, good.

Up your leg.

Good, good.

So, Kim, I think we got to call it quits there, but you’ve got a book coming out.

Tell me the name of that book, How to Freak Out Your Kids.

What is it?

Sorry.

Yeah, no, I hope not.

My Space Will Freak You Out is coming out in February.

I’m excited.

It’s a hand-picked assortment of really freaky things in the universe.

And when you think about it, that’s a long overdue book, I think.

Yeah, it’s a lot of fun.

I think it’s fun, like Lemony Snicket for space.

Yeah, there you go.

Nice analogy there.

That’s great.

Yeah, A Series of Unfortunate Events, is that what that was?

Yes, yes, I love that.

So, and that’s your ninth book, so congratulations on staying with it.

We’re in the same biz.

Not quite.

I try, not quite the same biz as you, but I try.

So Kim, this has been a delight.

Thank you, it has been so fun, as always.

I’m still waiting for my jacket.

Oh, she starts.

Yeah, and I said, Master’s Gold Jacket, but the Master’s Jacket is green.

Hall of Fame is gold, and that’s what you would get.

I think the gold sounds nice.

We’ll work on that, Kim.

All right, cool.

So good luck with this effort, and sometimes that helps too, I’m told, but you’re at it, and you’re at it strong, and we’d be delighted for this to have been your fifth appearance on StarTalk.

For anyone who wants to catch our prior episodes with her, just check our archives.

We have vibrant archives of past episodes.

You can search by name, by guest, by topic, and it’s all there for you, in case you’re a new joiner for who and what we are.

All right, Chuck, always good to have you.

Always a pleasure.

And Kim, we all love you.

Stay strong.

Thank you.

It’s so nice to be here.

This has been StarTalk Cosmic Queries, the Kim Arcand edition.

How do you like that?

I like that.

I like that.

As always, I bid you to keep looking up.
