Do you dream of working for a government agency that blends reality, science fiction, and national defense to create next-generation technology and ideas that eventually shape the way we operate as a society? Then DARPA might be the place for you. On this episode of StarTalk Radio, Neil deGrasse Tyson, comic co-host Chuck Nice, former head of DARPA Arati Prabhakar, and journalist and author Sharon Weinberger shed some light on the mysterious Defense Advanced Research Projects Agency (DARPA). You’ll hear about the history of DARPA: how the agency got started in 1958 during the Cold War, some of the first wild ideas it researched, and the advancements it helped shepherd over the decades. Not all of those wild ideas were ours: you’ll find out how DARPA was called in to investigate when the US Embassy in Moscow was being bombarded with microwaves. Explore DARPA’s role in the development of stealth technology, from the first practical stealth aircraft to the B-2 bomber. You’ll learn about ARPANET, which laid the early groundwork for the Internet that pervades our lives today. Discover more about the Boeing X-37 orbital spaceplane and our spy satellites, and why space remains a complicated domain. And if that’s not enough to blow your mind, roboticist Hod Lipson joins the crew to discuss the merger of artificial intelligence and national defense. You’ll learn about self-driving cars and how DARPA embraced them. Chuck takes to the streets of NYC to ask citizens how they feel about the rise of self-driving cars. We also answer fan-submitted Cosmic Queries about the future of artificial intelligence. Lastly, we investigate the dark side of defense technology and how neuroscience could play a role in the future of national defense.

Transcript

Welcome to StarTalk, your place in the universe where science and pop culture collide.

StarTalk begins right now.

Tonight, we will explore the intersection of science fiction and national defense inside the high-tech government agency developing America's top secret weapons of the future.

So let's do this.

Whoa.

I got with me, of course, Chuck Nice.

Feeling good?

Always, always.

Got your Let's Make America Smart Again shirt.

This is my Let's Make America Smart Again shirt, which quite frankly is a very cool shirt.

And we got with us tonight, Sharon Weinberger.

Hello, Sharon.

So you're the author of this fat...

Do people still write and read fat books?

They write them.

I hope they read them.

So this is The Imagineers of War: The Untold Story of DARPA, the Pentagon Agency That Changed the World.

So DARPA stands for what?

What is DARPA?

DARPA stands for the Defense Advanced Research Projects Agency.

And so you're a journalist.

You follow this industry for a long time?

Yeah, I've been writing about Pentagon-funded science and technology for about the past 17 years.

Oh, okay.

This book is the product of about five years of interviews, research and writing.

Well, you must do it well because you're still alive.

I tried.

Just saying, just saying.

No, she was undercover until this moment.

Ready to go public.

So tonight we're featuring my interview with the former head of DARPA, Arati Prabhakar.

And we met during her tenure as chief of DARPA in Washington, DC.

So let's check it out.

Growing up, to me, DARPA was always this mysterious place: you know, what are they doing there?

I don't know.

But it's really cool.

One day we'll find out, maybe.

Is that the mission statement?

Did I just capture that?

Well, as long as it moves the needle, as long as it really changes the world, and especially national security, then yeah, that's what we do.

DARPA is an agency that is very, very small.

We're 200 government employees, right?

And all of us are here in this office building in Arlington, Virginia.

All of us sitting in the offices right above my head.

Right above our heads.

We don't have labs.

We don't have any fixed infrastructure.

We are critically dependent on all the places and people that do.

OK, so I looked up your budget.

It's about $3 billion.

About $3 billion a year.

And you just give away all that money?

No.

No.

By the way, give and money just don't make sense together in a sentence, just to be clear.

What we do is we bring in program managers, really smart people in their fields.

They come in for typically about three to five years.

A tour of duty, kind of.

A tour of duty.

They are here for a short time.

What they come in to do is to craft a program.

It might be, can I make a ship that can sail without any sailors on board?

It might be something about a next generation of artificial intelligence.

It might be about biological technologies, whatever area.

They want to know: what are all the really creative, crazy, mind-bending ideas?

And they listen to all of that.

They go out and talk to the user community, for example, and especially the DoD.

But between thinking about where the big problems are and where the science and technology could take you, that listening is a key part of how they come up with that vision.

So we are very interested in people's big new ideas.

So I got my list here.

Okay, I want a wormhole.

Okay.

Big national security implications for that one.

I want a transporter.

Yeah.

Don't we all?

I want the flying car.

So when are we going to get these things?

A few more years.

A few more.

Yes.

There was a DARPA project called the 100 Year Starship that looked at wormhole travel, so...

Really?

A hundred years, maybe.

That's optimistic.

That's certainly not a waste of money.

And I like the way you put it, a hundred years, so none of us will be here to bitch about it.

So, okay, you also had robotic soldiers in there, and like force fields and mind control, crazy stuff.

These things...

That could be right.

They could, and the ideas come back again every few decades, the idea of a force field that can protect the planet, the idea of mind control, all of these things that...

This is part of the reputation.

Yes.

The spooky, mysterious reputation that DARPA has sustained over all these decades.

Yeah, spooky.

A lot of its work has been secret, has been classified.

It has also at times touted itself, or been touted by others, as a science fiction agency, some of which is overblown, but some of which is true.

So you're Men in Black, basically.

That's what you are.

So what are the origins of DARPA?

DARPA dates back to 1958, and basically in the fall of 1957, the Soviet... That was an important year.

I mean, NASA got founded.

That was the International Geophysical Year.

A lot of science tech stuff was coming together.

It was coming together, and a lot of it was prompted by the October 1957 launch of Sputnik.

This is the Soviet Union's launch of the first artificial satellite.

And it's hard to imagine now, but it was sort of a 9/11 moment.

It created this real political panic in the country that we were losing the space race.

The Soviet Union would have intercontinental ballistic missiles that would reach the United States.

Okay, so we said, all right, let's fund this agency. What's the mission statement?

To do whatever the Secretary of Defense directs it to do.

It was very vague, except it was supposed to take on space programs.

It was before NASA.

It was the nation's first space agency.

And that's not a scary mission statement at all.

Not at all.

Hey, whatever the Defense Secretary says do, we should do.

All right, so some have considered DARPA the place where mad scientists go and are given free rein.

So what are some of the craziest, maddest things to come out of there?

So one of the original mad scientists of DARPA was a Greek scientist named Nick Christofilos, who had these, like, fantastic ideas.

So he would be an immigrant.

He was.

Yes.

Exactly.

And he was a genius.

And scientists loved him.

Darpa loved him.

So one of his first ideas was a force field.

You know, we were worried about Soviet missiles attacking the United States.

So he said, what if you launched a bunch of nuclear weapons in the upper atmosphere that would release killer electrons and fry anything coming through it?

It would be a planetary force field.

That was one idea.

Oh, that's great.

Yes.

Let's nuke the sky.

To create a force field.

To create a force field.

What could go wrong?

Yes.

He had another fascinating idea of creating a particle beam weapon.

Same thing, to take down Soviet missiles.

You would just need to drain the Great Lakes to power it.

All at once.

By...

Who needs them?

You would put nuclear weapons under the Great Lakes to drain them.

To power the particle beam.

So these are out of the box ideas.

They were very out of the box.

You know, I'm starting to believe that DARPA might stand for Drugs Are Really Pretty Awesome.

And another one on my list here: how about Project Pandora?

What is that?

Project Pandora was a top secret project.

We should be cautious of anything called Project Pandora.

Yeah, they chose that name appropriately.

So in the 1960s, the CIA discovered that the US Embassy in Moscow was being irradiated.

They were sending low-level pulsed microwaves.

And this was the time when there was...

Who was irradiating who?

The Soviets, the Russians, irradiating us, the Americans, in our embassy in Moscow.

Yes, with microwaves.

And this was a time when a lot of literature was coming out suggesting that pulsed microwaves could alter human behavior, affect the mind.

So the CIA said, oh my God, the Soviets are trying to control our diplomats' minds.

Because we didn't know better.

We didn't know better.

When really they were just slow cooking us.

Yeah, so they assigned DARPA to test this.

You know, could microwaves be a mind control weapon?

Right.

So those things didn't quite pan out as people had perhaps hoped.

We lack psychic super weapons, yes.

But other programs have panned out famously and are responsible for some of our most sophisticated military innovations.

So I asked the head of DARPA about the intersection of technology and the military and what role that plays.

So let's check it out.

There are two sides of DARPA, because again, our job is for national security.

So one facet of it is these core underlying technologies like networking that you have to have if you're going to build sophisticated military capability.

And the other is military systems that we demonstrate, like this thing called stealth aircraft, which also started as a DARPA project, and the whole idea of precision strike, where instead of using massive weapons that wipe out everything, you develop the very sophisticated ability to find a very specific target, communicate back, and deliver a missile to precisely that location.

Rendering nuclear weapons completely absurd in the face of that ability.

Everything up to and including nuclear weapons was about greater mass and greater destructive power.

Precision said, we want to hit exactly what we want to hit. Really, it was driven by, again, the Soviets.

The Soviets we knew had more forces and they had tactical nuclear weapons, and we said there has to be a different way to fight that kind of fight.

So stealth and precision weaponry, somebody has to think that up and decide that that's a thing to do.

So what prompted DARPA to go there?

Well, ironically, it grew out of our most failed war effort, which was Vietnam.

DARPA had been very heavily involved in Vietnam in developing things like a quiet aircraft for Vietnam for counterinsurgency, precision weapons for bombing targets.

And when the Vietnam War ended, the Soviets had been modernizing.

We hadn't, and DARPA figured out a way to sort of repackage it for the European battlefield.

So Sharon, we have an image of the first practical stealth aircraft developed by DARPA back in the 70s.

Let's check it out.

Yeah.

So the whole concept is whatever radio signal goes forward and hits it, it should deflect somewhere but not back from where the signal came.

And that way, it's as though nothing is there, because you only know something is there not by the signal you send out, but by the strength of the signal that comes back.

And if you angle all the sides in such a way that it disperses the signal, you can make this thing look as small as a bumblebee on a radar return image.
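The idea that you detect something by the strength of the signal that comes back can be made a little more concrete. The sketch below assumes the textbook monostatic radar range equation, in which received power is proportional to a target's radar cross section (RCS) divided by the fourth power of its distance. For a fixed radar and a fixed minimum detectable power, that makes maximum detection range scale only with the fourth root of the RCS. The function name and the example ratios are hypothetical, chosen for illustration, and are not figures for any real aircraft.

```python
def relative_detection_range(rcs_ratio: float) -> float:
    """Return R_target / R_reference given sigma_target / sigma_reference.

    Assumes the monostatic radar range equation
        P_r = P_t * G**2 * wavelength**2 * sigma / ((4 * pi)**3 * R**4)
    with the same radar and the same minimum detectable power for both
    targets, so maximum detection range is proportional to sigma ** 0.25.
    """
    if rcs_ratio <= 0:
        raise ValueError("RCS ratio must be positive")
    return rcs_ratio ** 0.25


# Hypothetical targets: one with 1/16 the RCS of a reference, one with 1/10,000.
for ratio in (1 / 16, 1e-4):
    print(f"RCS x {ratio:g} -> detection range x {relative_detection_range(ratio):.2f}")
```

Because of that fourth root, cutting the radar return by a factor of 10,000 shrinks the detection range by only a factor of 10, which is one way to see why stealth designs aim for such dramatic reductions in what bounces back.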

You just explained why my children do not listen to me.

Just deflect them everywhere.

But you know what happened?

So that was in the 70s?

When was the first stuff?

That was in the 1970s.

The 1970s.

So our computing power was pretty low then relative to later on.

And so getting the perfect shape, one that doesn't reflect anything back toward the source, required formula fitting and calculations that the computers of the day were incapable of doing.

And so as computers got more powerful, you could go from these flat surfaces to a perfectly curved surface where every spot knows exactly how to reflect the signal so that nothing comes back this way.

And that gets you the B-2 bomber right here.

Aha!

I see what you're saying.

This is still to me the bat plane.

I love it.

Right.

So each of those surfaces, there's no surface that will reflect a radio signal back to where you're coming from.

So not all DARPA projects become weapons.

Even if they were conceived as such, not all become weapons.

Some become other stuff, right?

This is a fascinating feature of this.

And of course, DARPA research laid the foundations of some of the most valuable non-military technology that influences civilization today.

And I asked the former head of DARPA all about this.

She gave me a rundown of the greatest hits of non-military successes.

Let's check it out.

We're in the late 60s.

Think about what computers were like, right?

I mean, these huge mainframes and punch cards and interacting with us.

We've been there, right?

Don't get me started.

But even in that time, people were imagining what might be possible if you could connect computers together and they could share information and programs and tools to do our work.

I saw that movie.

It's called Terminator.

Well, in the 60s, think about vacuum tube computers and going to punch out your cards.

Imagine being able to think about that.

That was really the spark.

In the late 60s, ARPA at that time began a program called the ARPANET.

And the challenge that they threw out to the community was let's connect some computers together, see if we can actually get them to communicate so that you could start sharing information.

And how to get them to know what the protocols are for the communication.

And so the Internet protocols, TCP/IP, which is how this massive, scaled-up Internet still works today, were invented by someone who was a program manager at DARPA.

Those were the baby steps and look how far that baby has gone.

So let's move into the 80s and 90s.

I came to DARPA as a young program manager 30 years ago in 1986.

You've got genetic connections here.

Yeah, I got to start my career here pretty much in that.

That was totally awesome.

So some of the things that we did in that period.

So if you think about what's inside your smartphone, think about those.

Yeah, well, that's good.

So those are possible because you have very sophisticated integrated circuits and some of the early roots of that trace back to DARPA.

When your cell phone talks to the cell phone tower, it's using a chip made out of gallium arsenide.

It's a radio semiconductor component.

That comes directly out of major DARPA programs that we started because DoD needs radio communications and radar systems.

Siri traces back to a DARPA project that got spun out of SRI as a company called Siri that got acquired by Apple and became the product.

So all of those technologies, but this is the magic, right, is public dollars sparked all of those technologies that, you know, changed how we live and work.

So Sharon, she's taking credit for the internet, smartphones and Siri.

Yes.

Is that, is she all that?

Almost all of that.

So the biggest one, and what cemented DARPA's reputation, was networked computers: the ARPANET, which was fully and wholly a DARPA innovation and went directly into the internet.

Oh, thank God they changed the name from ARPANET because that sounds like the internet for really old people.

AARP?

She's absolutely right that Siri also came directly out of a DARPA program.

It turned out the military wasn't interested, so they spun it off.

Apple bought it.

It's in your iPhone.

So Sharon, what are the actual origins of ARPANET within DARPA?

Because I read that it had something to do with linking together the few survivors after a nuclear holocaust.

So is that true?

It's mostly untrue.

Mostly?

Well, no.

The idea that it was built for Armageddon is mostly untrue.

Well, the other myth was that ARPANET had nothing to do with nuclear weapons.

It was just scientists that wanted computers to talk to each other.

And that's a little bit of a myth, too.

So what actually happened was that the Pentagon was worried about command and control of nuclear weapons.

So they asked DARPA, can you look at this issue, command and control of nuclear weapons?

DARPA hired a scientist who didn't care about nuclear weapons.

He wanted computers to talk to each other.

So it was both coming together.

Well, coming up next, we're going to explore how DARPA stepped into my world, my world of space.

And we're going to see how they had a project to try to protect America's national security in space when StarTalk returns.

StarTalk, from the American Museum of Natural History, right here in New York City.

We're featuring my interview with the former head of DARPA, America's Advanced Defense Technology Agency.

Let's check it out.

What's this about, a space surveillance telescope?

Well, the Defense Department cares about space.

Which oven is that in?

A lot.

That you're baking, okay.

That's one that's pretty baked.

We just handed it off to the Air Force.

Not half-baked, it's fully baked.

That one's, well, for DARPA, it's fully baked.

You have to ask the Air Force.

But I think it's making a real difference.

The DOD issue for space is the following.

We can do nothing without space.

We need it to communicate.

We need it to navigate with GPS.

We need it to be able to see what's going on around the world.

It's essential to us.

But space is a very complicated domain.

It's not like the 50s and 60s and 70s where we were the only ones doing anything.

Only players, yeah.

So these are sensitive telescopes on Earth looking up that can monitor all the activity at 23,000 miles up, geosynchronous.

And there's a lot of commercial activity, which is wonderful.

Other nations are getting active, unfortunately not always in a constructive way.

So we're in a time when instead of orbital catalog maintenance where you just have a catalog and you know what's on orbit and you sort of pay attention once in a while, now in real time you have to be able to see and know what's happening on orbit.

And the vastness of that job is hard to imagine, right?

I mean, think about several hundred thousand times the volume of all the Earth's oceans.

That's what you have to be able to look at and see what's going on.
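The "several hundred thousand times" comparison can be sanity-checked with back-of-the-envelope arithmetic. This sketch assumes the volume in question is the spherical shell between Earth's surface and geosynchronous orbit, and it uses rounded constants that are not from the interview: a geosynchronous orbital radius of about 42,164 km measured from Earth's center, a mean Earth radius of about 6,371 km, and a total ocean volume of roughly 1.335 billion cubic kilometers.

```python
import math

# Rounded constants assumed for this sketch (not taken from the interview).
GEO_RADIUS_KM = 42_164       # geosynchronous orbit radius, from Earth's center
EARTH_RADIUS_KM = 6_371      # Earth's mean radius
OCEAN_VOLUME_KM3 = 1.335e9   # approximate total volume of Earth's oceans


def sphere_volume(radius_km: float) -> float:
    """Volume of a sphere of the given radius, in cubic kilometers."""
    return 4.0 / 3.0 * math.pi * radius_km ** 3


# Volume of the shell between Earth's surface and geosynchronous orbit.
shell_volume_km3 = sphere_volume(GEO_RADIUS_KM) - sphere_volume(EARTH_RADIUS_KM)
ratio = shell_volume_km3 / OCEAN_VOLUME_KM3

print(f"Shell volume: {shell_volume_km3:.3e} km^3")
print(f"About {ratio:,.0f} times the volume of the oceans")
```

With these assumptions the ratio comes out a bit above 230,000, consistent with the order of magnitude quoted in the interview.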

So Sharon, how many satellites are up there, spy satellites?

Oh, so that's classified.

I think there have been 276 missions total, which include spy satellites, some which are no longer active.

I think that we know that there are about maybe half a dozen spy satellite constellations, but the exact number is classified.

All right, so, and how about other things that are space-based, like the X-37 plane?

Tell us about that.

Yes, so it looks...

Tell us what you can about that.

So it is a very classified project.

It's the X-37B orbital test vehicle, and it looks a lot like the shuttle, you know, the manned shuttle, like a mini-shuttle, a mini-me of the shuttle.

And it has flown in space for up to 700 days, and the Air Force and the military has never acknowledged exactly what it's being used for.

Somehow...

Wait, just to be clear, just to be clear.

And a satellite, once it's in orbit, that's the orbit it has forever until you put it somewhere else with whatever fuel you have.

Whereas a space plane...

A space plane can maneuver.

You could put it over different spots of the Earth on command.

Up next, I ask the former head of DARPA about the future of robotics and AI when StarTalk returns.

We're talking about America's advanced defense technology programs, and joining us to help us in this conversation is AI and robotics expert Hod Lipson.

Hod, welcome.

So you're a professor of mechanical engineering, and you make machines that create.

Is that a fair characterization?

That's right, machines that are creative.

Trying to break that myth that machines can't actually be creative.

So these are self-aware robots.

To some extent, yeah.

That scares the hell out of me, okay.

Well, as you know, I sat down with the former head of America's Advanced Defense Technology Agency, DARPA, and I asked her where we're headed with the future of robotics and AI.

So we'll get her take on it, we'll come back to you.

Let's check it out.

I think for a lot of people, the word robot conjures up a humanoid robot.

I think that's a little bit different thing.

I try to disabuse people of that, because the human body, why?

There's nothing, why?

Right, we can do that stuff.

Yeah, we're not some model of anything, right?

Just do better, do a better job.

And different, because when machines do things that humans can't do, that's when we can do things together that are really amazing.

Rather than do just what a human can do.

But artificial intelligence, as you know very well, I think people are very aware now, it's having this resurgence in this new wave of artificial intelligence with machine learning at its core.

Now this idea that machines can take, you know, look at a million images and start figuring out how to label them and do it better than humans because of how they've been trained.

Once they beat us at chess and then at Jeopardy, you know, we should just give up.

Right, and Go.

Well, we might give up on those things.

There are a lot of things that they're now close to.

So we're good.

Really, because once you beat us in Jeopardy, I said, there's nothing left.

We're done.

Let's just move to Mars.

And I'm looking at my photo sorter on my computer and you give it face recognition, some hints and give it a few photos to train on.

It found me in one of these photos from when I was 11.

Isn't that amazing?

When I was 11.

I bet I could picture the 11-year-old you.

I kind of resembled myself, of course, but still.

It says, we think this is you.

Please confirm.

It's like, yeah, it's me.

It's a fuzzy photo taken on a Kodak Instamatic.

Oh, my gosh.

So that's what this learning era of AI is really all about.

It's in everything that we are doing.

So Hod, you're an AI roboticist.

What is that?

Well, robotics is this merger of AI and the physical world, the mechanics, the embodiment of a machine.

And so you need to sort of understand both the mechanics and the AI to make a physical, smart system.

So what do you create in your lab?

I love asking that of anybody who's got a lab.

What do you create?

What's happening in your lab?

What's happening?

So we're trying to look at sort of next generation robotics.

We're trying to look at how we can go from programmed machines to machines that can learn, that can learn fast, that can learn things that we haven't taught them, and do things that we normally don't think robots can do, like self-replicate, be creative, things like that.

They have self-awareness?

Self-awareness is one of these holy grails, I would say, of robotics.

It's going to take a while, but we're on that path.

There's a robot on this.

There's some creature on this table.

Yes.

It's a little creepy.

It is.

So what is this?

So this is one of our platforms, one of our robots where we study self-awareness.

We develop our algorithms for self-awareness.

We put them on this robot, and we see how well they do.

So you upload.

We upload it, and we see, can this robot become self-aware in a very primitive sense, but can it understand what it is?

What it is in itself.

In itself.

How exactly could it understand what it is?

Because what is it?

We don't know what it is.

Well, you know, so it starts off, but if you press the button, you can see how it works.

Press the green button.

Press the green button.

Green is good, right?

If it's green.

That's a good button.

Yes, it is, Morpheus.

Press the green button or the red button.

Just press this.

You told me to press it.

Go ahead.

All right, so it's going to take a while.

It's making a noise right now.

It's going to take a while, but it will soon start moving and having actuations and sensations. It's going to have all kinds of sensations about itself, and it's going to start forming what we call a self-image.

It's going to, in a very primitive way, begin to learn that it has four legs.

This robot is actually blind.

It has no ability to look at...

All righty.

It has...

So what's happening?

So what's happening is it's moving around and it's sensing all kinds of things about itself.

About how it moves, how it accelerates, what the torques in its motors are, what the currents are...

Can it sense that it's on a table and a few feet off the ground?

It doesn't know that.

No, it just...

But if one of the tips goes over the edge, will it pull it back off the edge?

No, it doesn't know anything. It's like a baby starting to learn what its body is.

And it takes it about four days.

It looks like it's dancing now.

I was about to say, I'm not a roboticist, but it looks horny.

It's throbbing.

I'm pretty sure it's aware of something right now.

So what happens four days from now?

Four days from now, if you look inside...

It pulls out the...

It takes over the world.

Where's the off button?

Oh, you just killed it.

Don't go to sleep.

It's just creeping me out on the table here.

That's only because it's coming to get you later.

Sorry.

So you want to have...

For some applications, you want robots that look like humans so that they can interact with humans or so that they can use human tools, the tools designed for humans.

Why would you do that?

For example, I can imagine 40 years ago, we would have thought, how would we have a self-driving car?

You wouldn't.

You would have a robot that drove in the car the way a human would drive the car.

No one is thinking, let's have the car drive its own damn self.

Right.

Actually, when you look at a lot of science fiction movies, they still have a robot driving the car.

Exactly.

Exactly.

So clearly, we can just bypass the middleman.

Absolutely.

We can.

Right.

So to design a robot to use tools the way a human does, isn't that, once again, failing to bypass the middleman?

Right.

Aren't we the weak link?

Sometimes, when we want a robot to communicate with a human, the human really needs to communicate with something that looks like another human.

So Sharon, at DARPA, have they been thinking about humanoid robots?

Is that a thing?

Yes.

I mean, they've been thinking about artificial intelligence going back to the 1980s.

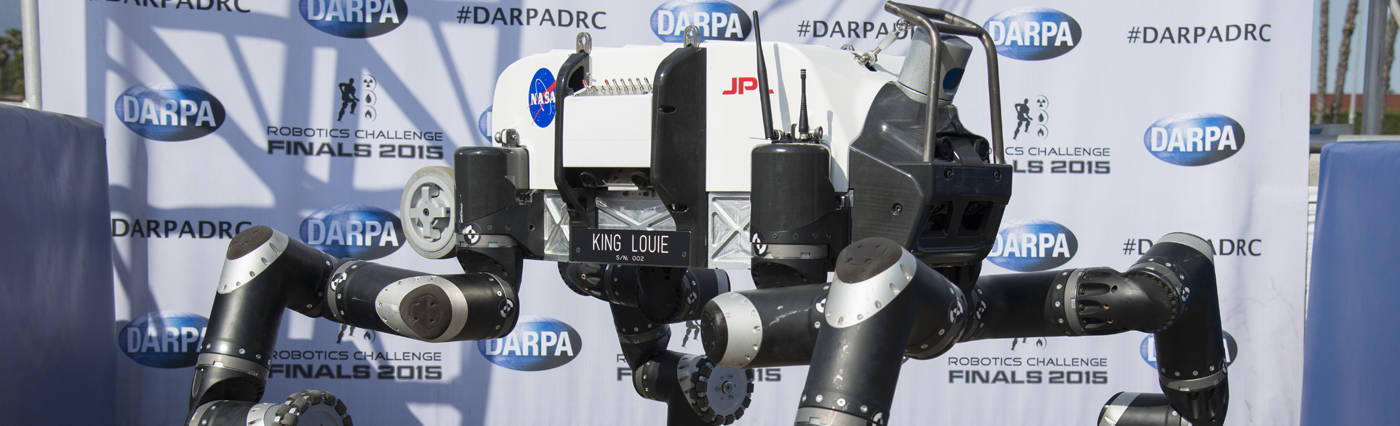

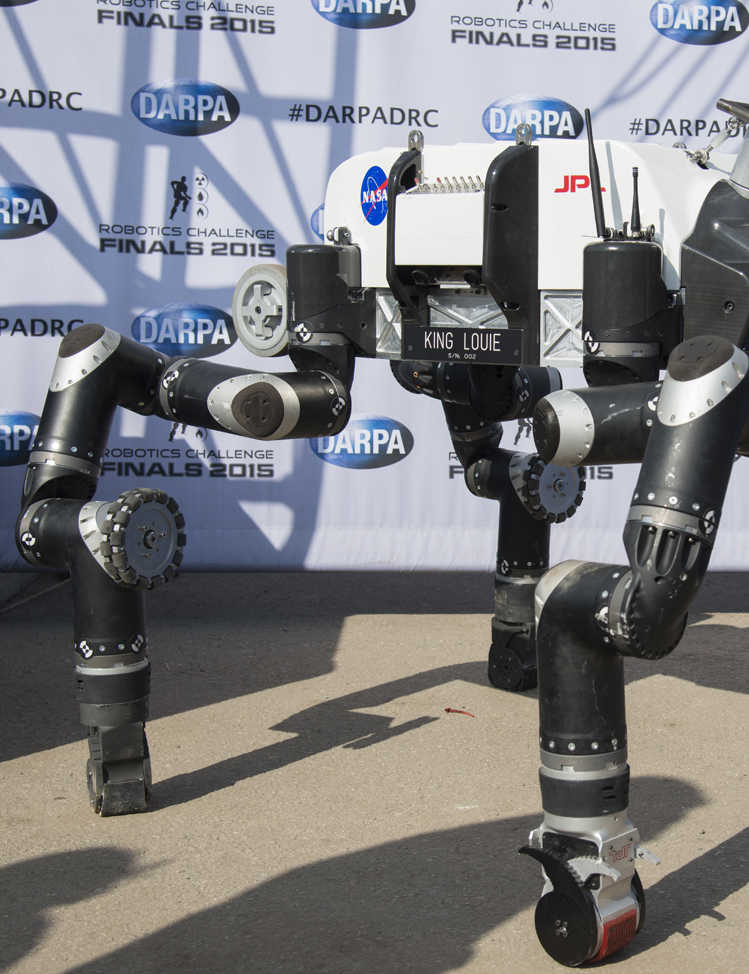

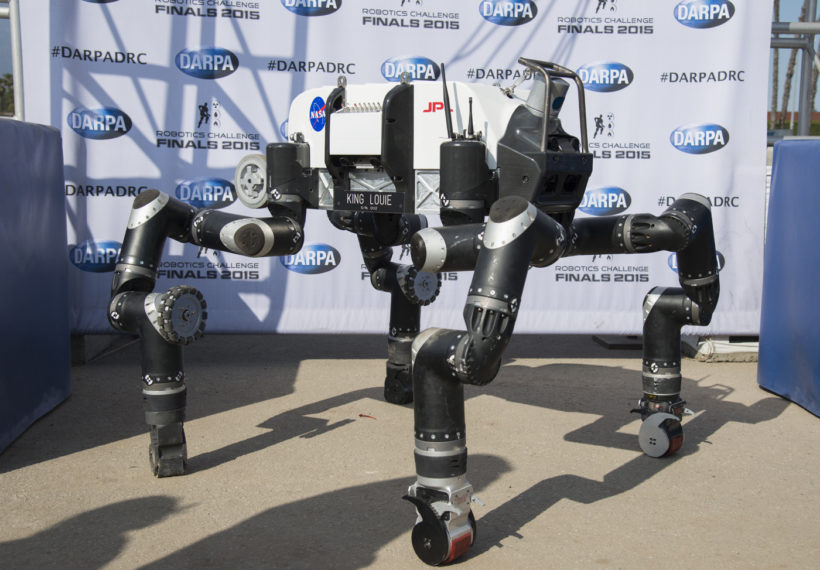

And then a couple years ago, they did this contest of sort of humanoid rescue robots to make them seem a little bit friendlier.

Was this the DARPA Robotics Challenge?

Yes, exactly.

So we've got a demo clip of one of the teams doing some testing with one of their sort of humanoid robots.

Let's see if we can bring that up.

So Hod, if I were that robot, I would be pissed off.

I would come around and be ready to kick this guy's ass.

You see, it's not self-aware yet.

Oh, if you keep it from being self-aware...

It's just a machine.

Now, this is in Boston.

That's why I used a hockey stick to poke him.

Yeah, exactly.

That's all I know, there's hockey in Boston.

And where can I get one of these kick-em robots?

Because, you know, I got a lot of pent-up frustration.

The ones that are not self-aware.

Yeah, I don't want a self-aware one.

You know that come around.

Wait, wait, wait.

So why are they abusing this robot?

Because we were feeling for the robot.

Even though it's not human, we were kind of like, that's not nice.

Even though that's a complete machine.

You know, one of the things we did with our robots is chop off one of its legs and watch what happened.

And we could see that after a while, it sort of learns that it's missing a leg and it begins to limp.

What kind of testing is that?

We test how well the robot can sort of understand what's happening, can remodel itself.

So DARPA, the military, might deploy this in a kind of disaster response situation, right?

Might you equip that with a gun?

Although, it's hands-worthy, these rubber pens.

Presumably, if it could...

You see the panache with which it stood back up?

Yes.

So, it's like, I'm ready for you.

So, you know, we all remember the movie RoboCop.

The military isn't really a rescue machine.

I mean, yeah, I think robo-soldiers are the eventual idea.

Right.

No, it could go into a dangerous situation and bring out fellow soldiers, for example.

It could, yes.

Well, that's cool.

Right.

It brings some guns, a little spin, you know, put it in its holster, that kind of thing.

But again, this would be guns that a human would use, but why train a robot to use a human gun when you can just build a gun into the chassis itself, right?

Absolutely.

See, his lab.

I don't know what he's got in his lab.

I'll tell you what he's got in his lab.

A bunch of one-legged robots who are pissed the hell off.

They're just waiting around.

I can't wait till I gain consciousness.

They've got, like, a plug into the internet, ready for some upload, some firmware upgrade, and then they come out.

So the robot's standing up again.

And it reminded me of the scenes in The Terminator where you think the Terminator's dead, but then it just keeps coming back up.

Yeah.

So that's all I could think of watching that.

Yeah, this all makes sense now, because when you talk about rescue and about artificial intelligence, it really seems like video games are nothing more than a training mechanism for the soldiers of tomorrow.

Oh, okay.

Yeah, well, that brings us, exiting as quickly as possible, to a part of the show called Cosmic Queries.

Yeah.

We have fans that have their own questions about robotics and AI.

Chuck, what's the first question?

Here we go, from Wesley Green on Facebook.

Will AI-operated spacecraft be how we explore the outer reaches of the cosmos?

I think that's the only way to do it.

We're not going to send people there.

Wait, wait, wait.

We're not going to send people because people want to come back.

But suppose you build AI so that it has self-awareness and it wants to come back.

Well, it won't want to come back to him because it's going to get its legs cut off.

No, no.

I mean, this is a moral, ethical frontier.

If you put enough AI into a robot that it becomes as inquisitive as a human and therefore you send them instead of humans, what moral obligation do we have that we just created a consciousness?

That's a great question.

But before that happens, there is value in sending a machine that's not quite intelligent enough to want to come back, and yet it can, let's say, detect life forms in ways that machines can't today.

And that's valuable.

We withhold from it a certain level of intelligence so we don't have feelings for it or vice versa.

That's right.

Next question.

Cool.

There.

Next question.

All right.

This is Sir Maxfield from Instagram who wants to know this.

What are your thoughts on human/robot sexual relations?

Ooh.

Hod?

Well, that's a...

First of all, Hod, what's going on in the lab?

It's definitely going to be a big piece of the market for humanoid robots.

Absolutely.

No doubt about it.

Yes, sir.

Next question.

This is Daniel Fridland from Twitter who wants to know this.

Will AI robots eventually steal astrophysicists' and comedians' jobs?

What?

Will they?

Will they be able to...

Absolutely yes.

First he's busting the legs off robots.

Now he's going to make a replacement robot for you and me?

I know, man.

I'm telling you right now, we should pull his legs off.

Before it's too late.

All right, coming up next on StarTalk, we're going to discuss the present, the origin and the future of self-driving cars when we return.

We're back on StarTalk from the American Museum of Natural History.

And we're talking about future technology with the former head of DARPA, America's Defense Research Agency.

We discussed why tech competitions have been so successful at sparking innovation.

So let's check it out.

You can put up prize money and then have competing communities invest their own money just to get the prize money.

And then the total amount of money invested is 10 times the value of the prize money.

Am I correct?

The first competition we did, that was a self-driving vehicle challenge.

Do you remember those?

Oh, yeah.

And here's what's cool: the first time we asked people, we said, put together a vehicle with no driver that can complete a course.

You don't get to see the course in advance.

Zero cars finished the course.

And then a year later when we did the competition again, a few cars actually completed the course.

And that's how fast we were able to accelerate that technology, that autonomous vehicle technology.

And by the way, if you want to find the people who won those various competitions, and all the competitors, they are the people driving the self-driving car work at Google and Toyota and Ford.

Because it could ultimately save 30,000 lives a year.

That would be a big deal.

So, Hod, you wrote a book, Driverless: Intelligent Cars and the Road Ahead.

Did you actually compete in that challenge?

So I was...

I heard rumors about this.

So we had a team at Cornell University at the time that competed.

It was one of the teams that did not win.

Okay.

But it was a fascinating experience in how machine learning is really the way to move these things forward.

So a lot of the teams that didn't make it in the first round were using sort of old-fashioned AI.

But in the second round, it's really the machine learning that made the...

Tell me again what machine learning is.

Am I just dense or what?

So in the field of artificial intelligence, there have been these two schools of thought about how to build it.

One was you design a clever algorithm with rules and logic.

And the second one is just you teach it.

You show the machine examples, and it figures out statistically what's right and what's wrong.

And you can teach a computer to do anything using these statistical methods.

It can glean knowledge from its experience that you as a programmer had no clue it would learn.

That's right.

Because it's learning on the fly.

That's right.

And there are things that you can program explicitly, like playing chess, like doing taxes.

Those are rules.

There are rules.

But to drive a car, distinguishing what's drivable, what's not drivable, nobody can write rules for that.

It's too abstract.
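To make Hod's two schools of AI concrete, here is a toy sketch in Python. Everything in it is a hypothetical assumption for illustration: a single made-up "roughness" feature for a patch of terrain, invented labeled examples, and a one-feature decision stump standing in for the far richer statistical models real driverless cars use. The first function is a rule a programmer writes by hand; the second picks its rule from labeled examples.

```python
from __future__ import annotations

# Toy contrast between rule-based AI and learning from examples.
# The "roughness" feature, threshold, and training data are invented.


def rule_based_is_drivable(roughness: float) -> bool:
    """School 1: the programmer hard-codes the rule."""
    return roughness < 0.5  # cutoff chosen by hand, not from data


def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """School 2: choose the cutoff that best fits labeled examples.

    Tries each observed roughness value as a cutoff for the rule
    "drivable if roughness < cutoff" and keeps the one with the fewest
    training errors (a one-feature decision stump).
    """
    if not examples:
        raise ValueError("need at least one labeled example")
    best_cut = examples[0][0]
    best_errors = len(examples) + 1
    for cut, _ in examples:
        errors = sum((x < cut) != label for x, label in examples)
        if errors < best_errors:
            best_cut, best_errors = cut, errors
    return best_cut


# Hypothetical labeled examples: (roughness, is_drivable)
training = [(0.1, True), (0.2, True), (0.3, True),
            (0.35, False), (0.6, False), (0.8, False)]

cut = learn_threshold(training)
print(cut)                           # 0.35
print(rule_based_is_drivable(0.35))  # True  (hand-written rule gets it wrong)
print(0.35 < cut)                    # False (learned rule matches the data)
```

The point of the toy is the one Hod makes: the learned cutoff comes from the examples, not from the programmer, so it can capture a distinction the programmer never wrote down.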

So, Sharon, why would DARPA engage in self-driving cars?

What's the motivation?

Well, the motivation goes back to its work in artificial intelligence in the 1980s.

Around the time that this first driverless car race was started, there were convoys in Afghanistan and Iraq being hit by improvised explosive devices.

If you take the people out, you reduce deaths.

So that's one of these cases where it has a direct and very purposeful military application, but the value to the rest of the world is incalculable.

Yeah, and we still haven't gotten there for the military.

I think the civilian world has benefited much more from this so far.

So Chuck, you're our senior sidewalk science correspondent.

You knew this; that's the title we gave you.

Yes.

Yeah, okay.

So you have a dispatch for today?

Yeah, as a matter of fact, I went out to Washington Square Park here in New York City to find out what...

That's like your spot.

I love it.

Washington Square, okay.

And I just wanted to find out what people thought about driverless cars in the city where most people don't drive.

Oh, okay.

How do you feel about the idea of driverless cars?

Why not?

Why not?

Famous last words, why not?

Would you drive or ride in a driverless car?

No.

No?

Don't trust them?

Don't trust them.

Don't trust them at all?

Say you're in the back of a driverless car.

It has a fender bender with another driverless car.

What do you do?

I start cussing.

Run.

So now you guys have student debt, because you just graduated.

Would you throw yourself in front of a driverless car to get that sweet guaranteed settlement money?

Yes.

Boom.

Spoken like a true musician.

Thank you, Chuck.

Well, next, we will explore the dark side of defense technology when StarTalk returns.

Welcome back to StarTalk, from the American Museum of Natural History, right here in New York.

We're talking about future technology with the former head of America's Defense Research Agency.

I asked what they've got cooking in the emergent field of neurotechnology.

Let's check it out.

This little bit of the human body, the human brain that we barely understand today, it's finally giving up its secrets.

We're just starting now to be able, for example, to get the precise neural signals from specific regions of the brain to translate them in real time, to figure out what they're trying to tell the rest of your body to do.

And we're starting now to take that basic science and turn it into technological capability.

And for us, that journey started with a program called Revolutionizing Prosthetics.

We knew we had to have a better standard of care for our wounded warriors than the really simple hook that was the upper limb prosthetic we've had for ever and ever now.

So the Luke Project is related to this, yeah?

And our Luke Project, one part of the...

Luke.

Isn't that the perfect name for this, for an advanced prosthetic hand?

We just delivered the first production unit to Walter Reed, after it went through FDA trials.

Yep, to give to our first wounded warriors.

Those are arms that will give them the weight of, and now approaching the dexterity of, your natural arm. Big advance.

The other branch of that project was to understand motor signaling, signaling from your motor cortex and then the signals that go into your sensory cortex that give you, you know, not just motor control but then feedback from sensation.

Which I think is how, in principle, we expected that to have happened in Star Wars itself.

There's a full back and forth communication.

Yeah, remember when his hand gets repaired, when Luke's hand gets repaired.

Yeah, and then sort of open up the hatch.

Yeah, exactly.

That's what we're dreaming about.

So, Hod, how does this technology work?

This brain-machine interface, what's going on there?

Well, a lot of it has to do with detecting signals through electrodes, interpreting those signals, understanding what signals correspond to what actions, and then translating those actions into pre-programmed hand gestures.
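The pipeline Hod describes (record signals, learn which signals go with which action, map the result to a pre-programmed gesture) can be sketched with a very simple decoder. This is a hypothetical illustration, not DARPA's method: the electrode count, firing-rate numbers, and gesture names are invented, and a nearest-centroid classifier is swapped in for the considerably more sophisticated decoders used in real neuroprosthetics research.

```python
from __future__ import annotations

import math

# Hypothetical calibration data: firing rates (spikes/s) on three
# electrodes, recorded while the user attempts each known gesture.
CALIBRATION = {
    "open_hand":  [[40.0, 5.0, 10.0], [42.0, 6.0, 9.0]],
    "close_fist": [[5.0, 38.0, 12.0], [7.0, 41.0, 11.0]],
    "pinch":      [[10.0, 9.0, 35.0], [12.0, 8.0, 37.0]],
}


def centroids(calibration: dict[str, list[list[float]]]) -> dict[str, list[float]]:
    """Learn step: average the calibration samples for each gesture."""
    result = {}
    for gesture, samples in calibration.items():
        n = len(samples)
        result[gesture] = [sum(col) / n for col in zip(*samples)]
    return result


def decode(sample: list[float], cents: dict[str, list[float]]) -> str:
    """Decode step: pick the gesture whose centroid is nearest (Euclidean)."""
    return min(cents, key=lambda g: math.dist(sample, cents[g]))


cents = centroids(CALIBRATION)
print(decode([39.0, 7.0, 11.0], cents))  # open_hand
```

In a real prosthetic, the decoded label would then trigger the corresponding pre-programmed motion in the hand; here it is just a string.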

So, at DARPA, you're developing this. Is it primarily for wounded warriors, or is there some other nefarious...?

That's been the current iteration of the program.

The very public statements have been neuroprosthetics.

But when the work sort of was jump-started back in the early 2000s, the DARPA director talked about, you know, controlling drones or robots with the human mind.

So, this has gone through some evolution.

So, what is the difference between controlling a drone or a robot with the human mind and just having some interface with a joystick?

Isn't that the same thing?

My mind is controlling a joystick that's controlling the drone.

But if you could have sort of finer controls, I mean, a joystick, you go left, right, up, center.

I mean, what you do, you know, the way you move your hand or your foot has much more complexity to it.

Like the movie Avatar, when the guy controls the dragon and they become one.

Because he had a USB ponytail that he connected to the...

So, then what is the goal, then, the DARPA goal?

Ultimately?

DARPA is a research agency.

It depends what the military's goal is.

The military will use it for whatever purpose it needs.

Right now, it needs to help wounded warriors.

But it may also have, will also have, weapons applications in the future.

The military fights wars.

Well, I asked the former head of DARPA, Arati Prabhakar, what's next in their plans to merge human minds and machines.

Just to see if they had something up their sleeve.

Let's check it out.

So, in 10 years, there will just be a brain in the middle of this building.

And then the rest of the world around it, right?

Everything will be plugged in.

DARPA takes control.

Oh, I don't know about that, but I'll tell you.

DARPA achieves consciousness.

DARPA net.

I know what you're cooking up behind.

What's behind that curtain over there?

So, this is the point, right, about every one of our powerful technologies is that all of us who are scientists and engineers, we're pursuing these things because we think they have the potential to elevate humanity, and we know that every one of those powerful technologies is going to have a dark side as well.

And so, those are the issues that I think society has to wrestle with, but so do those of us who are in this business.

As we always have.

Absolutely.

Sharon, give me some dark side here that she's talking about.

Is there some of that in your book?

There's some of that in my book.

The Imagineers of War, yes, okay.

One serious dark side: I asked the DARPA official in charge of those neuroscience programs.

Because you interviewed multiple people for the background of this.

Many people.

Okay.

And I asked this official, would you ever classify work in neuroscience, make it secret?

And I expected him to say, oh, absolutely not.

And he said, well, if we found something in our research that gave the military a strategic advantage, then we would.

So imagine classified neuroscience work: what that means and the implications of it.

Is that what she means when she says dark side?

Because I'm thinking she means like Darth Vader dark side kind of thing.

I think classified military neuroscience research is pretty dark.

Well, dark as in no one can see it because it's done behind, done secretly.

Well, dark because it's the very essence of what makes us human.

So, the idea of doing any classified, brain-related human testing is a little science-fiction-y dark.

I'm just thinking when I hear her say dark side, I'm thinking you could take this discovery and somehow disrupt the world.

Yeah, I think that's also what she means.

That's the idea.

That just slipped out of her.

Oh, yeah.

That's also what she means.

Well, there have been these sort of like war gaming studies over the years in the Pentagon that suppose someone else got ahead in brain science and neuroscience and created some sort of weapons.

We need to have a strategic, you know, sort of, you know, you could have a missile gap of neuroscience.

So, Hod, do you ever worry or wonder whether the robots you create could be used for evil, if not by yourself, by others?

I do.

And we do it nevertheless.

That's a great answer.

Because the benefits outweigh the risks in such, you know, overwhelming fashion.

Just imagine: just recently you saw these new AI systems that can detect disease, in this case skin cancer, better than the best team of doctors.

I mean, just imagine the future where people have access to that, where people have access to education at a personal level.

So, yes, there are bad things that can happen, but the benefits are just there to be had.

Parting thoughts, Sharon.

I think humans will outlive artificial intelligence for a while.

For a while.

For a while?

So encouraging.

All right.

If I may offer some final reflections, I am a scientist and I value pure research into anything at all, if it is a frontier between what is known and unknown in the natural world.

So to prejudge what will be used for good or for evil, that is really up to society.

And how wise are we as a society to even receive such discoveries?

So I think alongside such research, there should be maybe a tandem agency that is thinking about the morality, the dangers, the abuse that could unfold, so that we can sort of march together into this future.

And by the way, DARPA, I don't know why you guys don't have a much higher budget than you do.

Or maybe instead, there could be a DARPA-type agency in every branch of the human culture.

Because at DARPA, they take risks, risks that other sources of money do not take.

Well, the day you stop making mistakes is the day you can be sure you are not on the frontier, because you are doing things that are safe, things that you already know are going to work.

So I am just delighted that DARPA exists.

I like the fact that we got some robots that might take over some of our tasks, because there is too much work that I am doing that I don't want to do.

Let a robot take it over and maybe there will still be stuff humans can do, and if not, we just sit at the beach and enjoy our lives while robots take over the world.

I am cool with that.

Chuck, you are cool with that?

No.

That is a Cosmic Perspective.

I want to thank Sharon Weinberger, Hod Lipson, Chuck Nice, always.

I've been your host, Neil deGrasse Tyson.

As always, keep looking up!
