Where is humanity going, and what will we be like when we get there? Do we really have less than 15 years before computers match the intellectual and emotional capabilities of humans, and less than 30 years before artificial intelligence surpasses humanity? Join us for the Season 7 premiere of StarTalk Radio as Neil deGrasse Tyson examines futurist Ray Kurzweil’s predictions about “the Singularity” with the help of guest neuroscientist Dr. Gary Marcus and co-host Chuck Nice. Find out why Ray thinks that we’ll be able to directly link our neocortexes to the cloud, yielding an increase in brainpower the likes of which we haven’t seen since humans developed our frontal cortex millions of years ago. Ponder the possibilities of nanobot computers the size of blood cells, preloaded with information, that can enter our brains through capillaries and make us smarter. Throughout the episode, Prof. Marcus plays devil’s advocate, reminding us that Ray’s predictions are often at odds with mainstream projections of scientific and technological achievement. Finally, explore the potential benefits of advancements in biotechnology and find out about a company that is already using 3D printing to create human organs that are being “installed” into animals with some measure of success.

Transcript



Welcome to StarTalk, your place in the universe where science and pop culture collide.

StarTalk begins right now.

This is StarTalk, and I'm your host, Neil deGrasse Tyson, your personal astrophysicist.

And I'm the director of New York City's Hayden Planetarium, right at the American Museum of Natural History.

Today, my co-host, Chuck Nice.

What's happening, Neil?

Chuck, always good to have you.

Love you, man.

Love you, too.

Love you, man.

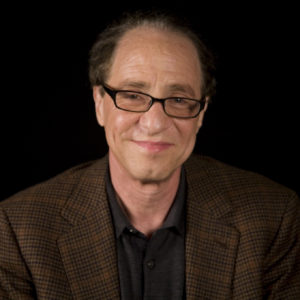

Today, we're featuring my interview with inventor, author, futurist, all around, sorry, crazy future guy, Ray Kurzweil.

And we'll be talking about artificial intelligence, of course, and where that leads: discussions of the future of the human race.

And our guest today is neuroscientist Dr. Gary Marcus.

Gary, thanks for being on StarTalk.

Thanks for having me.

Oh, we've got Chuck, our Vanna White here, holding up his book, The Future of the Brain.

Gary Marcus. So this is a collection of essays that came out a couple of years ago.

It came out a year and a half ago.

It's about to come out in paperback.

It has some Nobel Prize winners from a couple of years ago, Christof Koch from the Allen Institute for Brain Science, all kinds of top people talking about the future of the brain.

How are we gonna figure it out?

When will we figure it out?

What will it be like?

And what is the future of the brain?

Yeah, how we doing?

How we doing with the brain?

The brain is working pretty well, but our projects to understand it are hard.

Well, you founded a company called Geometric Intelligence.

That's right.

That's kind of audacious.

You're bringing the math in there.

So what is Geometric Intelligence?

It's a machine learning company.

It's an AI company, basically.

You founded?

I co-founded it with some friends, and we're inventing new algorithms that try to learn more efficiently.

So think about deep learning, which people are talking about all the time.

That's all about big data, really big data, and sometimes you can't get big data.

I have two little kids, they learn from small data.

So I want to have machines that can learn as efficiently as kids and not need big data.

Okay, so you're imagining a future where you can create machine learning inspired by the way kids learn.

That's right.

Okay, that's good.

That's great.

Yeah, because don't do it the way adults learn, or you'll fail.

Yeah, and even worse is the way computers learn.

Computers are very inefficient, so they can learn to recognize white males asking search queries in quiet rooms, but then you have somebody else speak into these machines and they don't really recognize them.

I remember that news story.

That sounds like they've learned pretty well.

That's very specific.

It's a little too specific, that's part of the problem.

Kids are much more flexible.

That's the mystery of cognitive science: why kids are more flexible than even the best machines that Google can put together.

Well, Ray Kurzweil, he's perhaps best known for popularizing the concept of the singularity.

I never forgave him for taking one of our words, singularity, from black holes.

Totally ripping you off, man.

It's like a hypothetical day.

Well, it's real to him and to others who follow this line of thinking: around 2045, when artificial intelligence overtakes human cognitive capacities, the world will change irreversibly, like walking through a proscenium through which we can never return, where the machines replace the humans.

And he was the author of The Singularity Is Near.

Does that sound apocalyptic?

Why didn't he just name it Run for Your Life?

And he invented many things, Ray Kurzweil, he invented many things.

I got the list there, text scanning, music synthesizers, voice synthesizers, speech recognition technologies.

He became a director of engineering at Google in 2012.

He worked on the team developing machine intelligence.

He also received the National Medal of Technology under President Clinton.

It's one of the two highest honors that the White House gives.

The other is the National Medal of Science.

And I was once on the committee to award that under President Bush.

So it's a very highly regarded tradition that we now have in the United States.

And he's author of seven books, five of which were best sellers.

He's got 20 honorary doctorates and honors from three presidents.

So the man, the man.

Yeah, he's done some things.

He's, he's, he.

Makes me feel bad.

I feel like I need a nap just hearing that.

So let's go to the first clip of my interview with him and find out exactly what he predicts here and now.

Let's find out.

In a book I wrote in the 1990s, The Age of Spiritual Machines, I predicted that...

The Age of Spiritual Machines, okay.

By 2029, computers would have all of the intellectual and emotional capabilities of humans, and so they would be spiritual machines.

And conversely, we are spiritual machines because our brains are composed of 300 million modules that recognize patterns.

Each of those modules has 100 neurons.

Those neurons have ion channels and dendrites and axons, and basically you can describe them based on physics.

So each neuron is a machine.

Very few people would disagree with that.

Well, then the 30 billion neurons in our neocortex form a machine also.

And actually we can understand it.

My most recent book, How to Create a Mind, talks about how that machine works.

It sounds diabolical, how to create a mind.

What's going on in your basement?

You can tell me, nobody's looking, tell me.

Well, we are understanding how human intelligence works.

I mean, these things are brain extenders already.

The smartphone that you point to on your hip.

Our brain is already expanded in the cloud, which is not a physics cloud, it's a computational cloud, but we're smarter as a result.

We'll directly connect our neocortex in the 2030s to the cloud and expand it.

The last time we did that was two million years ago, when we went from primates to humanoids and we got these large foreheads and we had the frontal cortex, which is where we do language and art and science and physics and radio shows.

No other species does that, but it's not qualitatively different.

The frontal cortex was just an additional quantity of neocortex.

The neocortex is organized in a hierarchy and we took that additional neocortex and put it at the top of the hierarchy and as you go up the hierarchy, things get more abstract.

So things like music and poetry exist at the top of the neocortical hierarchy, and we're going to add to it again by connecting our neocortex to the cloud, to synthetic neocortex, and expanding it the way we did two million years ago, except this time it won't be limited by a fixed enclosure; we'll be able to expand it without limit.

Gary, can you summarize for me: what is the difference between high-level computing and the human brain, and how could we close that gap, erase it entirely?

Well, I don't think we really know yet.

I mean, Ray is talking with a lot of precision.

Like he says there are 300 million modules in the brain, and he says that all these things are at the top of the hierarchy.

We don't even know what the right thing to count is.

So there's a lot of precision there that I think is maybe not justified.

Oh my goodness, wait a minute.

I just, you know, cause I'm a very polite person.

I'm a very polite person.

So I didn't catch that "precision" meant bullshit.

Oh, that was cool.

That was your word, not mine.

Just to be clear, speaking of excrement, Douglas Hofstadter, the Pulitzer Prize-winning writer.

Gödel, Escher, Bach.

Yeah, good old Gödel, Escher, Bach.

I've loved his writings and I still do.

He was even harsher about it.

He said that, quote, I'm quoting here.

If you read Kurzweil's books, what I find is that it's a very bizarre mixture of ideas that are solid and good with ideas that are crazy.

It's as if you took a lot of very good food and some dog excrement, blended it all up so that you can't possibly figure out what's good or bad.

By the way, that's tonight's special at the Restaurant Ivy.

So maybe dog excrement is a milder form of bullshit.

I'm just wondering.

So please continue, go on, go on.

I don't think I can touch that.

All right, no.

I mean, I listen to Kurzweil, and I know the famous Hofstadter quote.

And I see a lot of stuff that's accurate and a lot of stuff where there's this false precision where he says it's gonna be exactly 2029.

And as a scientist, I know that we have confidence intervals around everything.

We know it could be in this range or this range.

There's some kind of statistical uncertainty.

Same thing with a number like 300 million.

And Kurzweil asserts these things as fact.

And then he asserts some things that actually are fact.

And he kind of-

But wait, he's got more inventions.

He's got more inventions than you.

He has way more inventions.

Okay, so just let the record show.

I'm just saying.

But couldn't that be because, one, he's passionate, and two, he's selling books, and that sells books?

When you make-

He didn't start life saying, I wanna be a best seller.

I met the guy.

He's really an honest guy.

He's into this.

Right, right, he's for real about it.

He's not a manic cult leader where you look in his eyes and he wants to take over the world.

He's really just a regular guy who happens to be really smart.

Now, he goes beyond just what will computers do to match your human brain.

He wants to put nanobots in your brain, in your brain.

Let's find out where that goes.

Computers are getting smaller and smaller.

We'll have nanorobots the size of blood cells that have computers in them.

They'll go into the brain through the capillaries and communicate with our neurons.

We already know how to do that.

People with Parkinson's disease already have computer connections into their brain.

So my view is we're going to become a hybrid, partly biological, partly non-biological.

However, the non-biological part is subject to what I call the law of accelerating returns.

It's gonna expand exponentially.

The cloud is expanding exponentially.

It's getting about twice as powerful every year.

Our biological thinking is relatively fixed.

I mean, there've been a few genetic changes in the last thousand years, but for the most part, it hasn't changed much.

And it's not gonna expand because we have this fixed skull that constrains it.

And it actually runs on a very slow substrate that's a million times slower than electronic circuits.

But then why invoke the brain machine connection at that point?

Who cares?

You got the machine.

Because it's a much faster interface.

I mean, our fingers are very slow compared to, we could have millions of...

I didn't know the world was going too slowly for you.

You wanna speed it up.

Well, it is.

I mean, how long does it take you to read?

To read and write?

The Brothers Karamazov.

It takes, you know, months.

So you're suggesting that you can get these nanobots the size of your neurosynapses, let's say, and one will be preloaded with War and Peace, the novel, and would somehow inject it into your neurosynaptic memory banks, and then you're done.

Just like in The Matrix, where they would load memory programs into you.

Yeah, well, I mean, we will connect to neocortical hierarchies in the cloud.

Some of that could have preloaded knowledge.

We could communicate with each other more intimately that way.

But basically, we'll expand the size of our neocortex.

Gary, do you guys go there in your book?

We do, actually.

Christof Koch and I, in the epilogue, talk about where neuroscience will be 50 years from now, and we think nanobots probably will be here.

As in, in the brain?

In the brain.

In the brain.

But at the same time, we think it's a lot harder than Ray might be suggesting.

So Ray is right that there are already neural interfaces where you can directly hook up to the brain and read some information, but then he makes the leap to saying you'll be able to do that in general.

Nobody knows how to do that, for example, with language.

So nobody knows how to read out a sentence from...

That never stopped him from making an accurate prediction.

He said, nobody knows how to make a faster computer, but at the rate we're going, it will be faster, and sure enough, it was faster.

So why is your argument an argument?

To the heart?

Sorry.

That sounded harsher than I intended.

There's exponential progress in hardware.

He's quite right about that.

No one would argue it.

It's not a law, so it's not guaranteed.

They call it Moore's Law, but it's a trend.

It's really Moore's Trend.

But in software, it's not really the same.

So if you think about AGI, artificial general intelligence, we haven't made exponential progress there.

In chess, we've made exponential progress.

AI is basically like an idiot savant.

We build some very narrow machine.

It gets better and better.

It's true for chess, true for a lot of things, but general intelligence, like we had Eliza in 1965.

I remember Eliza.

I had a conversation with Eliza.

Maybe even you believed it was real.

That's how old I am.

Back when you had to type, I said, Eliza, I'm not feeling well.

And Eliza would reply, why do you think you're not feeling well?

It would turn the question back around: find the verbs and nouns and rephrase them, and it would sound like you were talking to a shrink.
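Gary's description of ELIZA, spotting key words in what you type and turning your statement back into a question, can be sketched in a few lines. The Python below is a hypothetical, minimal illustration in that spirit, not Weizenbaum's original program or its script; the rule table, the pronoun map, and the names `reflect` and `respond` are our own assumptions. Nothing in it models meaning, which is Gary's point.

```python
import re

# Hypothetical pronoun swaps so an echoed fragment reads from the program's side.
REFLECTIONS = {
    "i": "you",
    "i'm": "you're",
    "am": "are",
    "me": "you",
    "my": "your",
    "you": "I",
    "your": "my",
}

# Hypothetical (keyword pattern, response template) rules; the first match wins.
RULES = [
    (re.compile(r"\bi'?m (.*)", re.IGNORECASE), "Why do you think you're {0}?"),
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (.*)", re.IGNORECASE), "Tell me more about your {0}."),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(statement: str) -> str:
    """Turn a statement back into a question using the first matching rule."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return "Please go on."  # fallback when no keyword is found


print(respond("Eliza, I'm not feeling well."))
```

Run as written, it prints "Why do you think you're not feeling well?", the same reply Neil remembers, produced purely by pattern matching and word swapping.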

Yeah, I was going to say she could charge $75 an hour for that.

And now 55 years later, you have Siri, and people have the same kind of conversations with Siri, and Siri is just as fake as Eliza.

So Eliza didn't really understand what your problems were, even though Eliza asked you.

Are you telling me Siri doesn't know what I'm really saying?

I don't know what to believe anymore, man.

So we haven't made as much progress there.

So some things are exponential, and some are closer to linear.

And Ray is not really-

When I talk to Siri, I say, Siri, where's the nearest Starbucks?

It's going to find a coordinate grid.

It's going to get an address.

It's going to hand it to me.

Don't tell me it's not thinking.

You don't want to call it thinking.

But if Siri showed up-

It's narrow thinking.

40 years ago, your mind would explode, right?

But so here's a point that he made, sort of when I was just chilling with Ray.

It was that every time there's a new kind of AI, people say, oh, it's not really AI.

The real AI is that.

Whereas anything we're talking about would have blown away anyone's concept of AI decades ago.

So I think we have a moving baseline for how we're judging.

I mean, that's a familiar argument.

People say if it doesn't work, then it's AI.

When it finally works, it's just engineering.

And I think AI researchers have a right to complain about that.

But I still think if you're talking about general purpose AI, we just haven't made the progress.

So yeah, you can ask a few things.

Every time Apple rolls out a new OS, there's a new category of things you can ask.

Sports scores, Starbucks, and so forth.

But imagine if you have a kid.

I have a three-year-old kid.

Imagine if every six months he rolled out one new conversational topic and otherwise stuck to the same three.

I would take him to a speech pathologist or a neurologist.

I'd be really worried.

Well, maybe some of it is just that it's missing the emotion.

So what I really wanted to know is, how does Ray get such precision in calculating when the singularity happens, when we become slaves to robots?

That's what I really wanted to know.

He told me.

There's one thing that's surprisingly predictable, which is the pace of the exponential growth of information technology.

The price performance and capacity of information technologies like computation, communication, now biological technologies like sequencing and also simulating biological processes, the amount of data we're getting on the brain, many different measures, not of everything, but of information technology, follow amazingly precise trajectories, and they're exponential.

So I started with the common wisdom that you cannot predict the future, and I made a surprising discovery.

Lots of things are unpredictable, but if you look at the price performance and capacity of information technology, it follows an amazingly predictable trajectory, going back to the 1890 American census.

And one of the reasons you might be skeptical is it's not intuitive.

Our intuition is linear, and a linear progression, that's our intuition, goes one, two, three.

An exponential one, which is the reality of information technology, goes one, two, four.

It doesn't sound that different, except that by the time you get to step 30, the linear progression, our intuition, is at 30, while the exponential one is at a billion.
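Ray's one-two-four arithmetic is easy to check. The short Python sketch below is our own illustration, not something from the interview, and it assumes the exponential sequence starts at 1 and doubles once per step, so step n equals 2^(n-1). Under that indexing, step 30 lands at roughly half a billion, and it takes thirty doublings (step 31) to pass a billion, so "a billion" is best read as an order-of-magnitude figure.

```python
def linear(step: int) -> int:
    """Step n of the linear sequence 1, 2, 3, ... is just n."""
    return step


def exponential(step: int) -> int:
    """Step n of the doubling sequence 1, 2, 4, ... is 2 ** (n - 1)."""
    return 2 ** (step - 1)


# Compare the two progressions at a few representative steps.
for step in (1, 2, 3, 10, 20, 30, 31):
    print(f"step {step:>2}: linear {linear(step):>2}, exponential {exponential(step):,}")
```

Running it shows 30 versus 536,870,912 at step 30, and 31 versus 1,073,741,824 at step 31: the linear column barely moves while the exponential one passes a billion.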

So you're right on the curve as time goes on.

This gives you confidence in your modeling.

And so now you say 2029, what happens?

So in 2029, we'll be able to match you in intelligence in the computer.

As we go through the 2030s, we'll be able to multiply our intelligence some number fold.

You get to 2045, and according to my calculations, we'll be able to multiply our capacity for thinking a billion fold.

That's such a profound transformation.

It's such a singular transformation that we borrowed this term from you guys.

Thank you very much.

That's really all I wanted out of it: a thank-you to the astrophysicist for handing you this term.

It actually came from math; you borrowed it from math, where it means an infinite level.

But the most significant thing about a black hole and a singularity in physics is the event horizon.

Going past the event horizon is a little mysterious, and so there's a certain amount of mystery about going beyond the event horizon.

And that's the same, that's really the metaphor.

Going past that event horizon is hard to predict because it's so transformative.

So Gary, this precise talk is very apocalyptic, and it's influenced people in exactly the way cult leaders influence people, saying the end of the world is near.

Well, Ray takes a positive view of it.

The beginning of the world is near.

He thinks it's nerd rapture, we're all gonna ascend.

Yes, just the nerds.

Yes, exactly.

So, I mean-

You're an honorary nerd with us, because you do the show with me.

Well, I appreciate that because I'm ready to go.

We'll all want to ascend when we get the chance, I guess.

But again, there's the kind of false precision.

So, first of all, Moore's Law goes back to before Ray was on the scene talking about these things.

It's been around for a long time.

But second of all, you can get seduced.

You said since the 1890 census, information.

There's data going back there.

Oh, data.

I mean, there's a question about where the idea came from.

But anyway, you could look at something like cloning.

And if you were in 1995, you'd say, it's gonna be here tomorrow.

We've got Dolly and then the next thing you know, it's not really a practical technique.

All the clones died early and so forth.

We'll do more of that when we come back from the break.

But also we're gonna find out what Ray Kurzweil thought of the movie Transcendence and what he has to say about the existential risks associated with AI.


I'm with Chuck Nice, co-host, professional comedian.

Tweeting at Chuck Nice Comic.

I love it when.

I do get paid for this.

I'm stealing money.

Okay.

I am stealing.

Well, not from you.

That's the one that had Johnny Depp.

Oh, yes.

Oh, is that right?

Yeah, which was the Keanu Reeves version of Transcendence.

All right, all right.

You're analyzing these on a level that I...

I don't know, it's so weird that I don't have a life.

What?

So I brought up the movie just to get his reaction to it.

Let's find out where he goes.

In the film Transcendence, that's what I imagined your scenario would describe.

Just for people to remember, in Transcendence, Johnny Depp, the lead character, lifts his mind into the network of the world's computers and that becomes his life and his body's gone at that point.

And it becomes dangerous.

And that's a typical dystopian view of these technologies.

I mean, that's not how these things happen.

Did mobile phones or the web or the cloud just leap overnight?

It starts out at a point where it doesn't really work and it's kind of very flaky and people dismiss it because that doesn't really work.

By the time it works, it's been around for a long time and we've gotten used to it.

Okay, so let it be that, but now let's assume we get to that point.

Is that a natural progression of the scenario that you have predicted?

I mean, I think that's part of the human experience.

We're already a human technological society.

Yeah, but in that case, he's not even a body.

He has an existence in neurosynaptic.

Yeah, well, we are gonna be able to project our minds into virtual environments.

We're already starting with virtual reality that's a little crude today, but it'll become very realistic.

It'll ultimately go inside the nervous system, so that a couple could...

It'd be like Total Recall. In Total Recall, your vacation was just what they embedded in your mind.

A couple could become each other.

You can inhabit fantastic virtual environments.

You can have a different body in a virtual environment.

So we're already separating physical reality from its consequences, and we're gonna increasingly do that.

So what, so Gary, where do you brain people take all this?

Where are you going with this, or not?

I had a friend who said that all neuroscientists are closet upload people, which is to say that all neuroscientists want to achieve immortality by uploading their brains to the web.

But none of the neuroscientists...

Yes, we knew that.

None of the neuroscientists I know really want to do that.

All the neuroscientists I know think it's wildly unrealistic.

It's like 50, 100, 200 years away.

Now, wait a minute, because when you think about it, what are we without these little electronic impulses inside of us, these little synaptic jumps of electricity between nerve endings? That really determines everything that we think and touch.

And so if we can take that and recreate it artificially.

Why is that 200 years away?

Why wouldn't that be the same as being who we are right here?

I'm feeling this, I'm just not actually doing it physically.

I gave you a confidence interval.

I said 50, 100, 200 years away.

I mean, I think it's somewhere in between.

I do think it's possible to upload brains.

I mean, if your brain is uploaded, that's a copy of you, not you.

So those are really weasely error bars there.

Well, it's not.

Somewhere between 50 and 1,000 years from now.

Actually, I wrote a letter to the Times.

It's like, we'll be there sometime between... and 5 p.m., Tuesday and Friday.

It is like cable, that's the point.

It's like waiting for the cable guy.

We don't know exactly when he's gonna come.

I'm waiting for the cable guy.

We go around saying he's gonna come at four o'clock.

We're misleading people, that's the point.

But you must agree that if it goes the way he describes, there's tremendous utility to this.

You agree?

Well, I mean, it makes a copy of you, and it depends on how narcissistic you are whether you want a lot of copies of you floating around in the cloud.

It's not actually gonna make you immortal.

Okay, but fine, but let there be copies.

But I'm also talking about, there are a lot of brain disorders that brain people study.

If we can map your brain, can't we find out where stuff goes wrong?

That's one of the things we talk about in the epilogue to this book, The Future of the Brain: the difference between cases. For example, if you have a brain injury, maybe I can make a backup of your brain before the injury, do regular backups of your brain.

I'll be able to restore the backup.

Like a time machine for the brain.

That's pretty cool, but on the other hand, a congenital disorder.

That was an Apple product you just plugged there, by the way.

I wanna cut.

You know, I just realized that I did that.

I was like, oh, everybody knows Apple's Time Machine is your backup.

Time machines pre-existed as a thing.

Right.

There are gonna be some problems with the Ethernet.

It's not always gonna work right.

Sometimes it's gonna restore the wrong version, but at least it's possible in principle.

On the other hand, like if you have a congenital disorder, maybe we won't know how to fix that because we don't understand enough about how the brain works.

So even if you didn't understand the operation of the brain, if you simulated every molecule, et cetera, then maybe you could at least do a restore.

And maybe that'll be one of the first applications.

Is this the first step to immortality?

It's the immortality of a copy of you.

You know the Woody Allen line about preferring to live on in his apartment rather than through his work.

I mean, it's another way of living on through your work.

That's what I'm saying, but it's a pretty cool copy of you because you're like a Sim now.

So you can upload yourself.

Your own avatar.

Your own avatar.

I can upload myself and I'm still there if you're able to actually upload the content.

You'll be able to do roughly that long before then with VR.

I mean, you won't be able to do it at the neuron by neuron level, but you'll be able to make a pretty faithful avatar.

It'll get all the motion capture stuff, get your voice right and so forth.

That's actually scary, because you know there's gonna be some guy who's gonna make an avatar of himself as a girl and then go into virtual reality chat rooms and screw all the guys.

That's already happening.

Oh, right, yeah, isn't that kind of already happening?

Is that happening?

Plus, why do you know so much detail about how that can happen?

I have an active imagination.

Well, okay, so whether it's AI taking over us or us controlling AI for evil, nefarious purposes, there's no doubt that there are existential threats that this technology can bring.

And I asked Ray, of course, I had to ask Ray about this, check it out.

Technology has been a double-edged sword ever since it has existed.

Fire, I mean, fire kept us warm, but burned down our houses, and every technology can be used for creative and destructive purposes.

We actually have a new technology that already poses an existential risk, which is biotechnology.

The existential risk from artificial intelligence or nanotechnology is off in the future.

And we can debate, is it 10 years away or 50 years away, but it's not here yet.

But the ability for someone to take a benign virus, like a cold virus, and turn it into a super weapon that's more deadly, more communicable, more stealthy exists right now.

That could be done in a biotechnology lab probably a few blocks from here.

So that was recognized actually 30 years ago.

They had a conference called the Asilomar Conference to come up with guidelines: how can we keep this safe and reap the promise without the peril?

And they came up with the Asilomar guidelines.

Those have been made more sophisticated over time.

And they've worked very well.

We're now reaping the benefits of biotechnology.

The number of incidents, either intentional or accidental, where there's been harm from biotechnology so far is zero.

It's zero.

Now that doesn't mean we can cross it off our worry list because the technology keeps getting more sophisticated.

But nonetheless, it's actually a good model for how to keep these technologies safe.

Well, plus, you know, we know that fire exists, and so we have fire codes, right?

This is how you build a stairwell, and this is how you escape.

And this is how...

And we have a moral imperative to use fire or artificial intelligence or biotechnology to overcome the problems, you know, that humans have.

There's still a lot of human suffering.

And we're using AI to diagnose disease, come up with new cures, clean up the environment, and reduce poverty.

And we have a moral imperative to continue that way while we, you know, have ethical guidelines to keep the technologies as safe as possible.

So Gary, you're quite prolific on this topic in the popular media, not only professionally.

So one of your articles for The New Yorker is "Why We Should Think About the Threat of Artificial Intelligence."

So this sounds very Luddite, Ludditic.

I'm no Luddite.

In fact, I'm just launching an organization called aiforgood.org, which is about what positive outcomes we can get from AI.

But there are also risks too, and it's a trade-off, right?

But are ethical guidelines enough to just guide this?

Because we have ethical guidelines for everything else that could possibly kill us.

We probably need regulation too, I mean.

Yeah, regulation, but we do that.

We don't say let's not have airplanes because they could crash.

We have regulations to make them as safe as possible.

And they still crash, but we accept that risk.

So.

That's right, and I mean, we do some kind of calculus to decide whether it's worth it.

And maybe 200 years from now, people will look at us and be like, why did they use cars before they had computers in them to make them safe?

They lost so many people.

And so people in 2150 may look back at us now and say the ways in which we handled AI in its early days were really pretty poor.

And so I don't know what the regulations are gonna be.

It's probably gonna be iterative.

One of the things that I think we all worry about is that the pace could be fast and we don't have enough time to take care of it.

Do you share the total concern that the famous Trinity of Elon Musk, Bill Gates and Stephen Hawking have shared?

No, I have a milder view.

I mean, I think that real strong AI is-

Just to be clear, they're freaking out.

Right, they think basically machines are gonna take over and kill us all.

They kill us all and then the future of the world is a world of machines.

Right, a world of machines.

Right, right.

I don't think it's right.

The terminator.

The machines, they're coming, okay.

The honest truth is that Skynet is not gonna be here tomorrow.

They have an Austrian accent.

All the machines will have an Austrian accent.

Look at this, I'm going to kill you.

I'm sorry, go ahead.

I don't think that the Schwarzenegger version of it's gonna be here anytime soon.

I don't think the computers care about us so far.

You get computers that are exponentially smarter at playing chess and they don't give a shit about us at all.

I don't know if I can say that on the air.

But at the same time, I still think we need to be worried.

As computers get more and more embedded in our lives, they have more and more power to change things.

So they're gonna start driving our cars, for example.

And if the AI isn't right, then there's a risk there.

So it's really not about them taking over.

It's about the risk of failure.

Like, I see the real risk is, okay, all the cars are being driven by computers, and then all of a sudden we have some kind of magnetic pulse, and boom, everybody crashes all at once.

More than they're just gonna be like, yo man, I'm not taking you where you wanna go.

Exactly.

I don't care where you wanna go, I'm tired.

An ornery car, just like, right.

That's right, I would not worry about the ornery car, but I worry about security and programmability.

So if you've ever programmed anything, it doesn't always work.

Of course, security, programmability, we got that.

But what of this quote from Nick Bostrom?

I'm extremely familiar with his writings, and he says here that the creation of AI with human-level intelligence will be followed immediately by an almost omnipotent superintelligence, with consequences that would be disastrous.

We've had human level intelligence without omnipotence for a long time.

I mean, the word immediate is particularly perplexing.

I put immediate in here probably.

Sorry, that's my word in here.

Followed by, followed soon after, yeah.

And nobody knows how soon, nobody really knows what's gonna happen.

But I think it's worth spending.

I could just unplug the damn machine.

Yeah, I was gonna say, like, you know, people think about that.

Where's my thirty-aught-six?

That's the end of the machine like that.

How about that?

One, it's one, uh.

Look, we shoot each other for less.

We have a tremendous capacity for violence.

That's what these machines don't, they don't know who they are screwing with, man.

Are you kidding me?

I will bust you.

Bust a cap in your silicon wafers.

So, wait, to get back to my interview with Ray Kurzweil, I had to ask him what did he think was the most realistic AI film?

Just, he thinks about this, we all think about it.

Let me get like the experts view on that.

Let's check it out.

Of all the films that project sort of AI in the future, which would you say was the most realistic?

Well, I liked her because there wasn't one AI.

Like everybody had their OS, their operating system, and Theodore's human girlfriend also had a relationship with her operating system.

So that's more realistic.

I thought it was unrealistic that Samantha didn't have a body because I think it would actually be easier to provide her with a virtual body than to provide her with her human level mind.

So by the time we have Samantha's mind, we'll have virtual reality and she'll have a body.

And then the sex market will completely drive that.

I mean, the sex market does drive that.

It drives the bandwidth today on the internet.

Isn't it half the bandwidth of the internet?

It's porn.

Videotape and even books.

Gutenberg's first book was the Bible, but then there followed a century of more prurient material.

So in the future of the brain, we should not be surprised that sex drives decision making.

It's not going to change.

So in another StarTalk, we interviewed Dan Savage, and he made it very clear, something we all knew, but I hadn't quite put it that way, that sex predates humans by half a billion years.

It's kind of the most powerful force on the planet.

It's a force of nature unto itself.

So how much of that is driving what the neurosynapses are in the first place, plus what we'll do with the power over the brain in the second place?

Well, I think all of evolution is shaped by sex in part.

I mean, there's no question about that.

I think that in terms of, are we going to have sex bots and things like that? For sure.

And are we going to have...

Chuck, Chuck goes double thumbs up on that one.

Oh, my God, yes.

It's actually an interesting question, like, what's going to happen to society as sex bots become, you know, more and more authentic in some way?

Fine with me, as long as they program them not to get jealous, because I plan on having three.

But wait, but here's...

This is a polyamorous roboticist.

Okay.

Is that a sentence?

But I can tell you this.

Whatever goes on, you don't want to catch a computer virus.

That's the worst kind.

You don't want these things to cross worlds.

That's what I'm saying.

You cross species.

I just fixed the gonorrhea.

Now I got a computer virus I got to deal with.

So, but I don't know if it's a centerpiece of your conversations or not.

I mean, I look at efforts that are going on here.

In my notes, there's a campaign launched in 2015 for a ban on the development of sex robots.

I think that's silly.

That's silly.

Okay.

We should get rid of all the vibrators too.

Like why?

They're called vibrators.

I was gonna say, we just don't have them for men, so why are they trying to shut us out?

I mean, come on, equal time, people, equal time.

And there's a company, TrueCompanion, which has designed what they call the first sex robot, called Roxxxy.

Roxxxy with three Xs, triple-X Roxxxy.

Yeah, it's got three Xs, of course.

Look at that, that's so clever.

Of course, a male sex robot also in development.

That totally useless male sex robot, completely useless.

Every man is a male sex robot.

We are programmed to want sex and to have it no matter what, and you will never have to beg us for it.

Okay, suppose you program a sex drive into AI, because then that's just making it more human.

That would be honest, right?

Well, I guess there's a question about what it means to program a sex drive into an AI.

Like, do you mean you're going to program the robot to have sex with people or to actually want it?

I don't want it.

I don't want it.

If you want to be a real person, you program it to a robot.

Probably nobody's going to bother with that.

It's easier just to program it to do certain actions and so forth.

I don't know if you need that level of conscious reflection.

That's what's interesting about her, though.

Like or one, see, you got it.

That's it, because here's the thing.

Otherwise, you're just going through the motions, and that's called marriage.

Nobody needs that.

No, no, there's a subtle distinction here.

There's a subtle distinction.

So one of them is it likes it if it happens to it.

But if it wants it, then it could be waiting for you on the street corner.

Yeah, you're right.

You know what?

Talking about your tight ass.

I will not be ignored.

I'm still here.

I think I've calibrated the language now.

The real lesson of the Turing test, the famous Turing test, is that people are very easily fooled.

You don't have to go to great lengths in order to fool a person that a machine is taking interest in them and so forth.

And so solving these finer points is not where the growth is in that growth industry.

You can get away with easier stuff.

And of course the movie Ex Machina was basically how to use sex as a force in anything that happened in the storytelling.

He taught her too well.

You saw that movie.

He taught her far too well.

Taught her real good.

Spoiler alert.

More of my interview with Ray Kurzweil when StarTalk returns.

Welcome back to StarTalk.

I'm with my co-host, Chuck Nice, and our guest, neuroscientist, Gary Marcus.

And this is a tweeting neuroscientist, apparently.

You're tweeting at Gary Marcus, M-A-R-C-U-S.

So, now I know you're out there.

I'm gonna find out what you gotta say in the Twitterverse.

We're featuring my interview with the inventor, futurist, all-around deep-thinking dude, Ray Kurzweil.

And so far, we've been talking about AI and robots, but I also brought up the topic of 3D printing during that interview.

And what he was, he thinks about where that technology is headed, because if you can 3D print stuff, maybe one day we can 3D print organs or a physiological form.

Let's just find out where he thinks this will go, check it out.

Cool.

What's the distant future of 3D printing?

Are we gonna be able to one day just print a human being and then...

We're in the hype phase now, kind of like where the internet was in the 1990s, if you remember, if you had the URL dog.com, you were a billionaire and then the year 2000, people realized, wait a second, you can't make money on these internet companies.

When we had the internet crash, it almost took down the world economy.

And now we actually do have internet companies like Google and Apple and Microsoft that are worth hundreds of billions of dollars.

It's gonna be a similar story with 3D printing.

Right now we're in the hype phase.

There's interesting niche applications.

The exciting applications, I believe, will be in the early 2020s.

You'll be able to print out clothing.

We need some micron resolutions.

We'll be there then.

You'll have millions of free cool designs that are open source.

You can download for free and print out for pennies per pound.

You'll also have a proprietary market.

People are already, I'm involved with a company where we're printing out organs, human organs, and successfully installing them with some limitations in animal models.

Within 10 years, I believe, you'll be able to print out human organs.

Okay, Frankenstein.

Well, you know, if you...

You just say that so casually.

If you need a new lung.

Can you print a brain?

Is that in the future?

You probably don't wanna do that because I think it makes more sense to emulate thinking non-biologically because that's really what we're working on.

But if you need a new kidney or a new lung, you'll be very happy to be able to print it out on a 3D printer.

Ultimately, we'll be able to print out most of the physical things we need as we go through the 2020s and it will revolutionize manufacturing.

Gary, you wrote an article for The New Yorker on growing brain parts.

That's the equivalent perhaps to 3D printing a body part.

Where did you land on that?

I mean, it's something that's gonna happen here.

I agree with Ray.

The only differences I have with Ray are usually about dates.

I mean, he says these things with certitude, it's gonna be in the 2020s.

And we don't know because there's all kinds of complications about infection and how to get the parts right.

Every clip we've played for you, you say, yeah, I agree with him there.

But we began with you like totally saying he had, you know.

No, I disagreed on the neuroscience.

I disagree on the structure of the mind and I disagree on the dates.

I do agree we're gonna be able to print brain parts and other body parts.

I mean, that's already happening.

It's not to production level stuff that you can ship, but.

Yeah, just go to the local hardware store.

It's gonna be in vending machines.

It will.

Look at that, lost an ear somehow.

Mike Tyson bit off my ear.

You have to put in a drop of blood so they can customize it to you or something like that.

But yeah, I think that will happen someday.

Not as soon as Ray thinks, but sure.

I mean, the real question is not when is this gonna happen?

What's the world gonna be like after all these things do happen?

I think maybe it takes 50 years for things he says take 20 years, but the world is gonna be different.

So what are some of the unintended consequences of these advancements?

Because this is the thing that kills me about all of these technological forays that we make is that we go there and then we go, oh, wait a minute, I didn't realize that this was gonna happen.

You did what?

Right, exactly.

My favorite line, I forgot where I got this, it was the last words ever spoken by humans ever is, let's try the experiment the other way.

That's it, all of a sudden.

We don't want those to be our last words.

That was the end of the world.

The end of the world.

Let's wire it the other way and see what happens.

We don't want oops to be our last word.

I think that's right.

And so, I mean, I think a lot of the things that are on the table in discussion now are science fiction, they're not gonna happen, but we don't know which ones are science fiction, which ones we need to watch out for, and so I do think it's worth investing real money.

I got some data here, what was it?

Printed body parts brought in nearly half a billion dollars in 2014, up 30% from the previous year, according to an article in Nature News in April 2015.

It's already a thing.

Yeah, I mean, not printing brain parts.

There's an old joke about brain transplants are the one thing where you wanna be the donor rather than the recipient, and that's, you know, a little bit more complicated, right?

And let me tell you something, I tell you where you can go get your money for that is the NFL.

They will gladly fund that research.

They are, the NFL is, you know, funding what?

They just play NFL with head-butting, and then when you get out, you just get a new brain.

A new brain, right.

Yeah, there you go.

Well, it's more like people want to figure out how to repair your brain after a concussion.

I mean, that is, in fact, a big industry, and people are making progress on it.

Well, and repairing the brain, right.

Why get a new brain if you can just go in there and repair it?

Yeah, yeah, it's just a few neurosynapses that have been.

Well, you want to keep your ideas, your personality, your memories, and so forth.

I got that in the chip.

So, you know, get with the program here, all right?

We already got that on my USB chip.

That's where Ray is confused about reality and possible future.

So, I know from NASA, I hosted a panel with some NASA representatives where they're printing machine parts that you might not be able to get at the local hardware store when you're on Mars, right?

On a long trip, and you got to make a bolt or a fan blade or something.

You just print it, and those, of course, are just sort of dumb instruments.

They don't have the complexity of an organ, a human organ, but I can imagine.

That's what Damon should have said in The Martian.

I'm gonna 3D print the shit out of this.

So in the future, maybe it's not about sending a medical doctor to help your body repair its own wounds.

It's about sending...

Hewlett-Packard.

Hewlett-Packard is very big in 3D printing.

A machine, and you just replace stuff that breaks.

And kind of the way they did in Star Wars, Luke's hand.

They just, you know, you pick up in the next scene, testing the fingers, and he's good to go.

Right.

I think that will happen.

Not even a thing.

So you don't fear the future of 3D printing.

That's not a scary plight for you.

The version of it that does scare me a bit is 3D bioprinting, which is also, I mean, we were talking about a piece of it now.

People are going to be able to 3D print viruses and things like that.

I worry about what we'll call unpleasant actors, to put it diplomatically, trying to do those kinds of things.

I asked Ray, as our conversation drew to a close and all of this stuff is on the table, I had to ask him, what are the best and the worst possible outcomes?

Let's see where he goes.

The best and I think one that we are actually following, although people don't necessarily agree with this, is that life is getting better.

We will, I believe, by mid-late 2020s eliminate poverty.

As we get to 2030s, we'll eliminate disease.

We're going to dramatically expand human life expectancy.

People say, we're going to run out of energy and other resources.

We have 10,000 times more sunlight than we need for free to meet all of our energy needs.

And there's lots of other resources.

We have lots of water, but it's dirty or salinated.

But if you have inexpensive energy, we know how to clean up water.

Vertical agriculture, where we grow food in vertical buildings controlled by artificial intelligence will produce very inexpensive, high-quality food, hydroponic plants.

Which is already really happening.

We have inexpensive, high-calorie food compared to 50 years ago.

Absolutely.

But basically, it's moving towards greater prosperity.

So what's the downside?

The downside is, I mean, there's lots of movies about the downside.

I don't need you to tell me about what the movies do.

The AI is trying to destroy humanity, and it's the humans against the artificial intelligence.

So the robots are two groups of humans fighting for control of the AI.

One thing that gives me comfort is we don't have one or two AIs in the world.

We have two or three billion AIs.

And a smartphone is an artificial intelligence.

It's in fact very intelligent, and it accesses the cloud and makes it even more intelligent.

And it's in billions of hands.

So Gary, what are your reflective thoughts on this as we wind down here?

I think the thing that he left out is income inequality.

So I think that it's certainly the case that these new technologies, other things being equal, are going to make a more productive world.

There's going to be more resources tapped more efficiently.

But what he leaves out is that the data is very concentrated in a few players.

And so are some of the best AI techniques, patents and things like that, are locked up in a small number of companies.

That's an early problem that possibly could be resolved later, because so many people have smartphones now, and even poor people have smartphones, right?

It could be, but I think there's a whole issue about employment we didn't talk about today.

That's a homeless guy.

Call me.

Cell phones are going to become essentially free.

But jobs are going to become scarce, and that's an issue that Ray didn't touch on.

And then the other issue is what is the real probability of some risky scenario where the machines turn us into paper clips?

I think it's very small, but it's probably not zero, and it behooves us as a species to make sure it comes as close to zero as we can possibly make it.

Okay, that's a rosy outlook.

Chuck, you got any?

We're good to go.

Listen, you guys had me at Sex Robot, and so I'm good.

This is all headed as far as I'm concerned.

In the right direction.

It will go to Sex Robot, and everything will go from there.

It will branch out.

We will never see you again.

Chuck, anybody saw Chuck?

Oh my God, I wish that wasn't true.

Well, I went in as his biggest skeptic, because I, you know, don't tell me that the world is going to be...

You know, everyone always says the world is going to be different because of something they thought up.

And I do a lot of the reading of history.

So does he, of course.

And when I finally confronted him, just to see how sort of sane he was and how straight he was, and when he makes a prediction, it's based on a calculation.

It's not just some dreamy stuff he thought up in an armchair, you know, in his lazy boy armchair.

And so I gained a level of respect that I didn't know was possible within me to grant to him.

And so whether or not he's right, I mean, futurists don't have to always be right.

If you're right one out of five times, that's an awesome track record.

And I think he's right a little more than one out of five times.

Plus it's a coin, and you're flipping, and one of the two would be better.

Yeah, that's true.

But if you have many, many, many predictions, and you see how they play out.

There's actually interesting stuff on Wikipedia that talks about his track record.

People are interested in the data.

Okay, excellent, excellent.

But I'd say I'm going to lean his way more than I was before.

And I'm glad somebody's at least thinking about it, because I certainly wasn't before I chatted with him.

You've been listening to StarTalk Radio.

Gary, thanks for being on the show.

The Future of the Brain.

I'm going to pick one of these up and find out where you guys are coming from.

And Chuck, this might be the last show we have of you, because you'll be sex-botting for all the future time.

So that's our show.

As always, I've been your personal astrophysicist.

And as always, I bid you to keep looking up.

This has been StarTalk.
